Anthropic SDK 0.100–0.102: What to Delete on Cloudflare

A migration playbook for senior devs running production AI on a Durable-Object-plus-Workflows stack. Maps every piece of the Managed Agents API that landed in SDK 0.100–0.102 to the Cloudflare primitive it replaces, surfaces the three…

The Anthropic Python SDK shipped 0.100.0 on May 6, then 0.101.0 on May 11, then 0.102.0 on May 13: three releases in eight days that turned Managed Agents into a hosted runtime with outcomes, webhooks, and multiagent orchestration baked in. I went through my own 6-Durable-Object stack on Cloudflare looking for what client.beta.managed_agents.sessions.create() replaces, and the honest answer is about 280 lines of retry-and-poll code in two workflow steps. The rest stays.

What actually shipped in SDK 0.100 to 0.102

Three releases, eight days, and the surface area of the Python SDK changed more than it had in the previous six months. The dates matter because anyone pinned to anthropic==0.99.x in production missed the entire Managed Agents runtime in one sprint.

v0.100.0 (May 6, 2026) added multiagents support, outcomes support, webhooks support, and vault validation to the beta namespace. The shape that matters: client.beta.managed_agents.sessions.create(thread=..., outcome=..., metadata=...) returns a session_id and runs async on Anthropic's infrastructure. You stop owning the orchestration loop.

v0.101.0 (May 11, 2026) added the AWS client for Claude Platform on AWS and updated every cookbook example to claude-sonnet-4-5-20250929. If you're on Bedrock, this is the release you need: ANTHROPIC_BEDROCK_SERVICE_TIER env var support (default/flex/priority) landed here, not in 0.100.

v0.102.0 (May 13, 2026) added BetaManagedAgentsSearchResultBlock types, cache diagnostics for the prompt cache beta, and Pydantic iterator eager validation. The block-type surface is still moving, which is the single biggest reason I'm not migrating my multiagent code yet.

The headline feature is outcomes. You write a rubric, a separate evaluator agent grades the result against it, and the agent retries until it passes. Anthropic's internal eval claims up to +10 points on task success over standard prompting loops, +8.4% on docx generation, +10.1% on pptx. That number tracks with what I see in my own Critic Durable Object: a single re-prompt with judge notes is worth roughly one quality tier of model upgrade.

The other half is webhooks. Eight session events fire to a URL you register in Claude Console: session.status_run_started, session.status_idled, session.status_rescheduled, session.status_terminated, session.thread_created, session.thread_idled, session.thread_terminated, and the one that matters most, session.outcome_evaluation_ended. The signing secret is whsec_…, shown once at creation. You verify with client.webhooks.unwrap(payload, signature, secret).
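Only one of those eight events carries a graded result, so the handler's first job is deciding which events to ignore. A minimal sketch, assuming each delivered event exposes its name as a type string (the field the handler later in this piece switches on); EVENT_TYPES, isKnownEvent, and needsD1Write are hypothetical helpers, not SDK exports.

```typescript
// The eight session event names listed above. These helpers are
// hypothetical; they are not exported by the Anthropic SDK.
const EVENT_TYPES = [
  "session.status_run_started",
  "session.status_idled",
  "session.status_rescheduled",
  "session.status_terminated",
  "session.thread_created",
  "session.thread_idled",
  "session.thread_terminated",
  "session.outcome_evaluation_ended",
] as const;

type ManagedAgentsEventType = (typeof EVENT_TYPES)[number];

// Narrow an arbitrary string (e.g. a parsed payload's type) to a known event.
function isKnownEvent(type: string): type is ManagedAgentsEventType {
  return (EVENT_TYPES as readonly string[]).indexOf(type) !== -1;
}

// Only the outcome event carries a result worth persisting; lifecycle
// events can be acknowledged (and logged) without touching D1.
function needsD1Write(type: ManagedAgentsEventType): boolean {
  return type === "session.outcome_evaluation_ended";
}
```

Unknown names come back false from isKnownEvent, so an event type added in a later SDK release can be acknowledged and logged instead of crashing the route.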

Multiagent orchestration also shipped, but behind a research-preview access request. I'll come back to why I'm not flipping that switch.

What this means for non-technical founders

Anthropic now runs the agent for you. You write the rubric ("did the article hit 2,000 words, cite three primary sources, and pass the de-AI regex?"). Anthropic's evaluator agent grades the output and re-runs the agent until it passes. Your engineer stops writing the retry loop.

Two effects on cost. First, fewer hand-engineered retry loops in your codebase, which means less senior-engineer time spent maintaining them. Second, more retries happen inside Anthropic's session, which is billed at $0.08 per session-hour on top of tokens, so every retry that lengthens a session adds runtime cost. Whether that's a net win depends entirely on how long your sessions run.

The honest framing: if your team is shipping a v1 agent product, Managed Agents replaces roughly 40% of the orchestration code your senior engineer would otherwise build. If you've already shipped one, it replaces a smaller slice, but the webhook side eliminates the polling loop you almost certainly have somewhere.

What to ask your CTO this week: "Are we still polling for completion, or have we switched to session.outcome_evaluation_ended yet?" If the answer is "polling," that's a half-day refactor that drops infrastructure cost and request latency in one move.

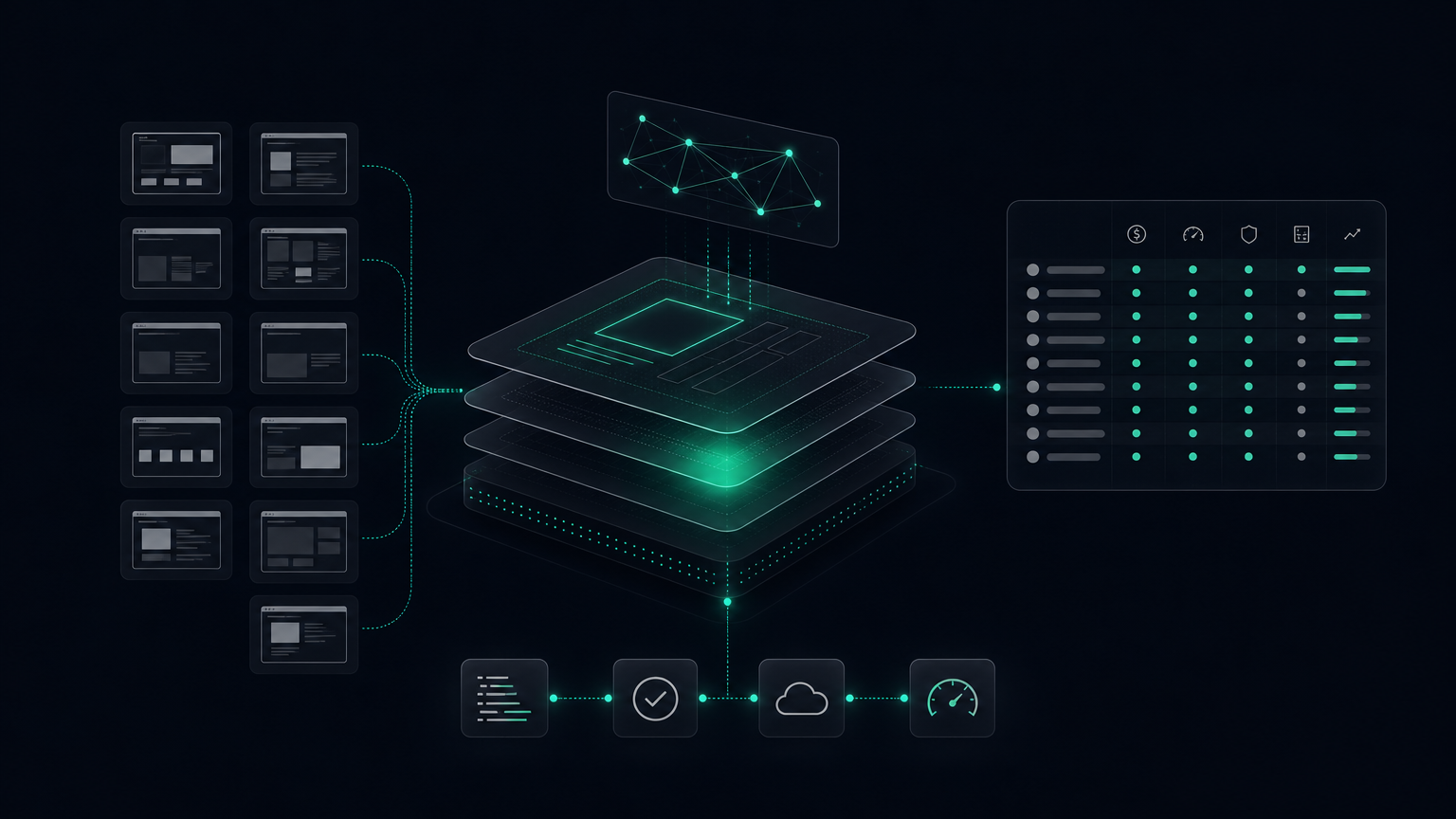

The Cloudflare stack I'm running today

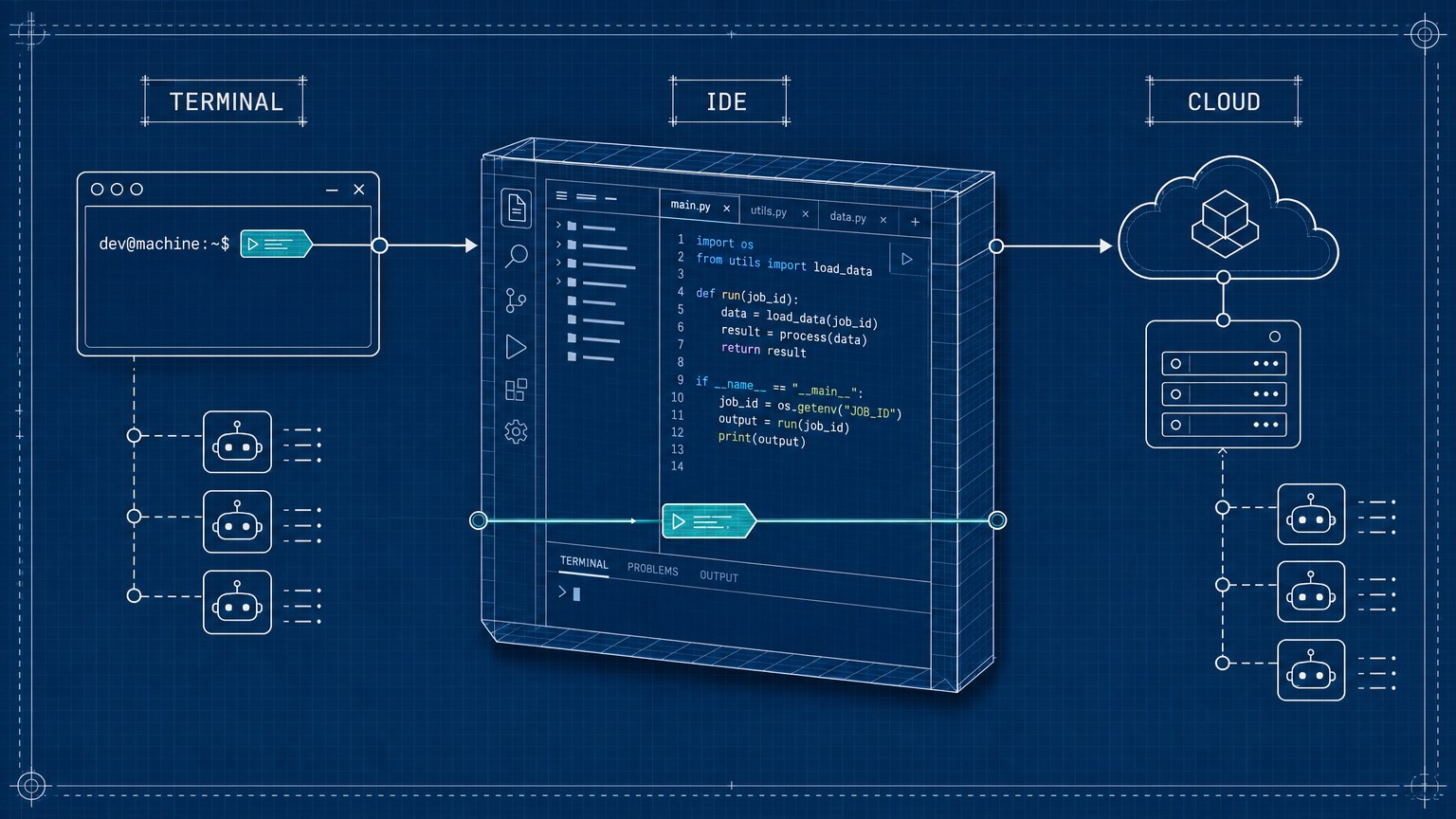

For context on what gets deleted, here's the architecture: a single Hono worker on Cloudflare Workers (ES2021 target, Static Assets binding) fronts six Durable Objects, one per publisher routine (Editorial, Discovery, Writer, Distribution, Maintenance, and Manager), plus a seventh, ManualIntake, that backs the admin UI.

Each routine fires through one of six Cloudflare Workflows: PublishWorkflow, WriteArticle, PublishArticle, EnrichIdea, DiscoverIdeas, DistributeArticle. The one that does the most work is PublishWorkflow, eight idempotent steps deep: validate, ground, generate, clean, persist, index, cover, publish. Every I/O step is wrapped in:

```typescript
const result = await step.do(
  "generate-article",
  // Workflows' retry config takes a delay alongside limit; 10 seconds is illustrative.
  { retries: { limit: 3, delay: "10 seconds", backoff: "exponential" } },
  async () => env.WRITER.generate(brief)
);
```

D1 holds canonical state (editorial_brief.status transitions: dispatched → drafting → published / failed). Vectorize handles per-block embeddings (Gemini-2 768d, asymmetric retrieval format). Every paid model call flows through a single chokepoint, callAi(env, ctx, runner), which logs to ai_call_log with agent_id, workflow_instance_id, and idea_id for cost rollups, and enforces a daily $20 cap plus a per-instance $1 cap.
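Because D1 is the source of truth, it's worth making the allowed status transitions explicit so a replayed step can't write an impossible state. A minimal sketch of the dispatched → drafting → published / failed lifecycle above; nextStatus is a hypothetical helper, and treating published and failed as terminal is an assumption here (the lifecycle doesn't say whether failed briefs get re-dispatched).

```typescript
type BriefStatus = "dispatched" | "drafting" | "published" | "failed";

// Allowed forward moves. published and failed are treated as terminal
// (an assumption; adjust if failed briefs can be re-dispatched).
const ALLOWED: Record<BriefStatus, readonly BriefStatus[]> = {
  dispatched: ["drafting"],
  drafting: ["published", "failed"],
  published: [],
  failed: [],
};

// Returns the new status or throws on an illegal move. Re-applying the
// current status is a no-op so replays and redeliveries stay idempotent.
function nextStatus(from: BriefStatus, to: BriefStatus): BriefStatus {
  if (from === to) return to;
  if (ALLOWED[from].indexOf(to) === -1) {
    throw new Error(`illegal editorial_brief transition: ${from} -> ${to}`);
  }
  return to;
}
```

Allowing the same-state write matters here: a redelivered webhook or a replayed step.do will try to write the status it already wrote.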

This is the 6-DO setup I documented earlier. The piece that matters for the Managed Agents migration is where retry logic lives. Two places:

- Workflow step retries (cheap, free if the underlying call succeeds): network blips, rate limits, transient 5xx from upstream providers.

- A hand-written "judge-and-retry" loop in clean.ts: run Writer, run Critic, if score < 0.75, re-prompt with Critic notes, max 3 attempts.

Number two is what Managed Agents replaces. Number one stays.
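For reference, here is the shape of the loop being deleted. A minimal sketch, assuming the Writer and Critic are injected as async functions (the real ones are Durable Object calls routed through callAi); the Writer and Critic types and judgeAndRetry are hypothetical names, and returning the best-scoring draft after three misses is an illustrative choice, not necessarily what clean.ts does.

```typescript
// Hypothetical stand-ins for the Writer and Critic Durable Object calls.
type Draft = { body: string };
type Verdict = { score: number; notes: string };
type Writer = (brief: string, criticNotes?: string) => Promise<Draft>;
type Critic = (draft: Draft, rubric: string) => Promise<Verdict>;

const PASS_THRESHOLD = 0.75;
const MAX_ATTEMPTS = 3;

// Write, grade, and re-prompt with the Critic's notes until the score
// reaches the threshold or attempts run out.
async function judgeAndRetry(
  brief: string,
  rubric: string,
  write: Writer,
  critique: Critic,
): Promise<{ draft: Draft; verdict: Verdict; attempts: number; passed: boolean }> {
  let notes: string | undefined;
  let best: { draft: Draft; verdict: Verdict } | undefined;

  for (let attempt = 1; attempt <= MAX_ATTEMPTS; attempt++) {
    const draft = await write(brief, notes);
    const verdict = await critique(draft, rubric);
    if (!best || verdict.score > best.verdict.score) best = { draft, verdict };
    if (verdict.score >= PASS_THRESHOLD) {
      return { draft, verdict, attempts: attempt, passed: true };
    }
    notes = verdict.notes; // feed the critique into the next attempt
  }
  // best is always set here because MAX_ATTEMPTS >= 1.
  return { ...best!, attempts: MAX_ATTEMPTS, passed: false };
}
```

Every line of this, plus the per-attempt cost logging around it, is what the outcome parameter absorbs.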

The 280 lines SDK 0.100 lets me delete

The judge-and-retry loop in src/worker/workflows/publish/clean.ts is the cleanest mapping to outcomes. Today it's roughly 120 lines of orchestration: invoke Writer, invoke Critic against a rubric stored in editorial_brief.rubric_md, parse the score, branch on threshold, re-prompt with notes attached as a system block, repeat up to three times. The Critic itself is another 80 lines of Durable Object (hibernation hooks, per-instance SQLite for rubric history, idempotency keys per attempt). The per-attempt ai_call_log rollup in callAi.ts is another 40 lines, because each attempt logs separately and the dashboard rolls them up.

All three blocks collapse into one Managed Agents call:

```typescript
const session = await anthropic.beta.managedAgents.sessions.create({
  thread: { messages: [{ role: "user", content: brief.body_md }] },
  outcome: {
    rubric: brief.rubric_md,
    evaluator: "claude-sonnet-4-5-20250929"
  },
  metadata: { brief_id: brief.id, workflow_instance: ctx.instanceId }
});

return { session_id: session.id, status: "pending" };
```

That's it. The session runs to completion async on Anthropic's side. The outcome parameter does the work the Critic DO used to do: a separate evaluator agent grades the output against the rubric and re-runs the primary agent until it passes.

Code I add: one route at /api/admin/webhooks/managed-agents that verifies the whsec_… signature, switches on event.type === "session.outcome_evaluation_ended", and writes the result back to D1.

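```typescript
import { drizzle } from "drizzle-orm/d1";
import { eq } from "drizzle-orm";
// Your Drizzle table definition; this import path is hypothetical.
import { editorial_brief } from "./db/schema";

app.post("/api/admin/webhooks/managed-agents", async (c) => {
  // Verify against the raw body; re-serialized JSON would not match the signature.
  const raw = await c.req.text();
  const signature = c.req.header("anthropic-signature");
  if (!signature) return c.json({ error: "missing signature" }, 400);

  const event = await anthropic.webhooks.unwrap(raw, signature, c.env.WEBHOOK_SECRET);

  // Acknowledge the other lifecycle events without writing anything.
  if (event.type !== "session.outcome_evaluation_ended") {
    return c.json({ ok: true });
  }

  // The D1 binding has no query builder of its own; wrap it with Drizzle.
  const db = drizzle(c.env.DB);
  await db.update(editorial_brief).set({
    status: event.outcome.passed ? "published" : "failed",
    body_md: event.result.body,
    session_cost_usd: event.session.usage.total_cost_usd
  }).where(eq(editorial_brief.id, event.session.metadata.brief_id));

  return c.json({ ok: true });
});
```

Forty lines, signature-verified, idempotent (keyed on brief_id).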
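Keeping the event-to-row mapping a pure function makes that idempotency claim testable without D1 or Drizzle. A minimal sketch, assuming the payload fields the handler above reads (outcome.passed, result.body, session.usage.total_cost_usd, session.metadata.brief_id), which I haven't checked against the SDK's generated types; OutcomeEvent, BriefUpdate, and toBriefUpdate are hypothetical.

```typescript
// Assumed payload shape, mirroring only the fields the handler reads.
interface OutcomeEvent {
  type: "session.outcome_evaluation_ended";
  outcome: { passed: boolean };
  result: { body: string };
  session: {
    metadata: { brief_id: string };
    usage: { total_cost_usd: number };
  };
}

interface BriefUpdate {
  id: string;
  status: "published" | "failed";
  body_md: string;
  session_cost_usd: number;
}

// Pure mapping: the same event always yields the same row, so a
// redelivered webhook rewrites identical values.
function toBriefUpdate(event: OutcomeEvent): BriefUpdate {
  return {
    id: event.session.metadata.brief_id,
    status: event.outcome.passed ? "published" : "failed",
    body_md: event.result.body,
    session_cost_usd: event.session.usage.total_cost_usd,
  };
}
```

The property only holds while the write is a plain overwrite. If session_cost_usd ever becomes an accumulating SET cost = cost + ?, a redelivered webhook double-counts and you need a dedup key such as the session id.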

Net diff: minus 240 lines of orchestration. Minus one Durable Object binding from wrangler.json. Minus one migration file. Plus 40 lines of webhook handling. Plus one secret in Cloudflare Secrets Store.

The polling endpoint at GET /api/admin/workflows/:id/status keeps working. It now reads session state from D1 (populated by the webhook) rather than from env.PUBLISH_WORKFLOW.get(id).status(). The UI doesn't change. The admin dashboard doesn't know the difference.
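The read side is a single keyed lookup. A minimal sketch using D1's prepare().bind().first() chain; D1Like mirrors just that subset of the binding so the function can be exercised with a fake, and D1Like, StatusRow, and readBriefStatus are hypothetical names. The column names are the ones the webhook handler writes.

```typescript
// The subset of the D1 binding this sketch touches: prepare → bind → first.
interface D1Like {
  prepare(sql: string): {
    bind(...values: unknown[]): { first<T>(): Promise<T | null> };
  };
}

// Columns populated by the webhook handler.
interface StatusRow {
  status: string;
  session_cost_usd: number | null;
}

// Read webhook-populated state instead of calling workflow.status().
// Resolves to null when no brief has this id.
async function readBriefStatus(db: D1Like, briefId: string): Promise<StatusRow | null> {
  return db
    .prepare("SELECT status, session_cost_usd FROM editorial_brief WHERE id = ?")
    .bind(briefId)
    .first<StatusRow>();
}
```

Returning null for an unknown id lets the route answer 404 instead of reporting a default status.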

One thing to flag: the step.do retry wrappers stay on every other I/O step. Cover-image generation, Vercel revalidation, and Cloudflare cache purge don't benefit from outcome rubrics, but all of them benefit from Workflows' replay-safe step caching. Don't rip out what's working.

The one file I'm keeping, and why the cost math flips at scale

callAi.ts stays. Daily $20 cap, per-instance $1 cap. Every paid model call routes through it, whether the call is direct, via AI Gateway, or via Managed Agents.

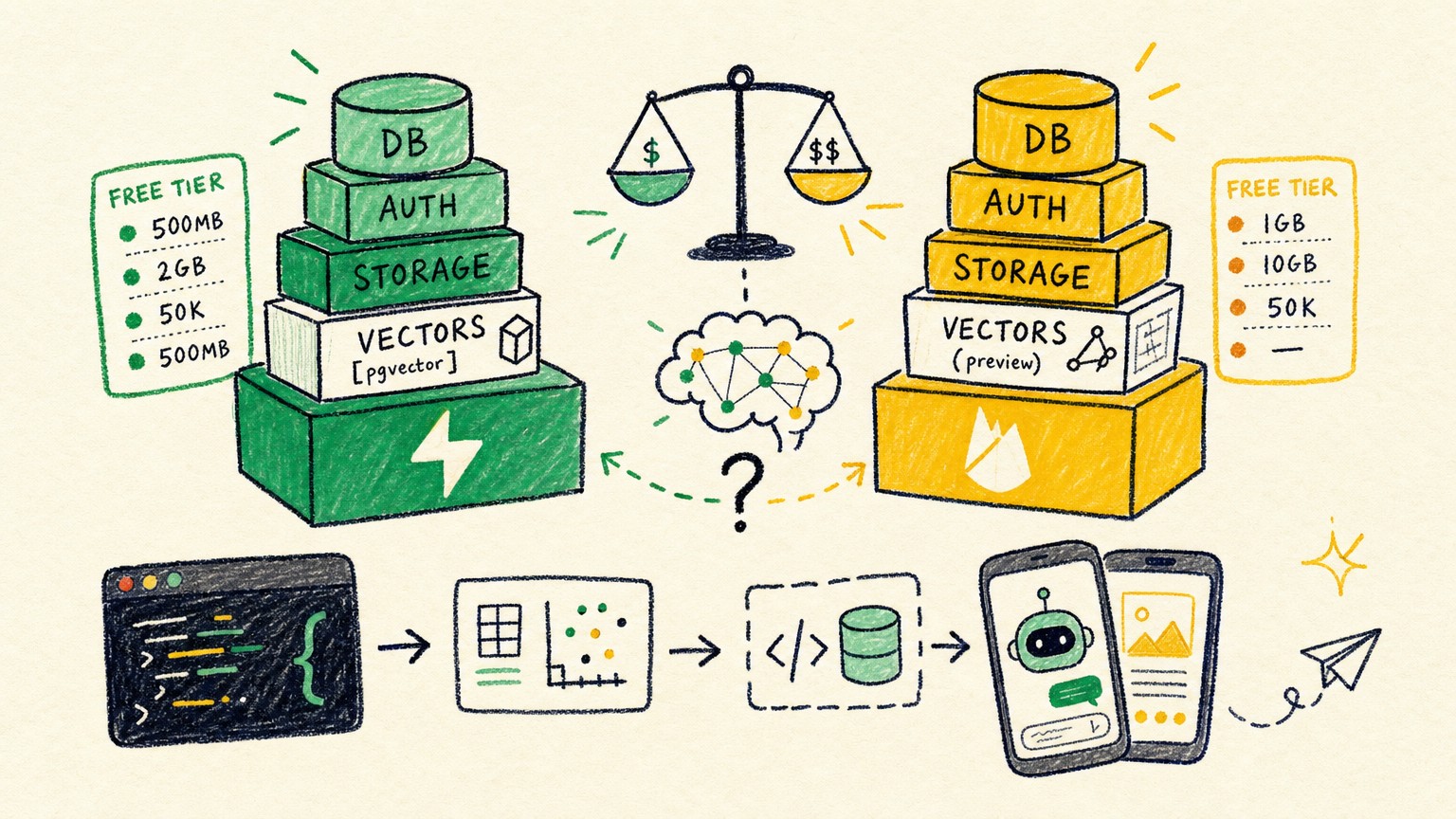

Here's why this matters. Managed Agents bills on three axes: tokens at standard pricing, plus $0.08 per session-hour, plus $10 per 1,000 web searches. At my volume (six publisher routines, one article each per fire, around 3 minutes per session), that's about $0.004 per article in session-hour cost on top of tokens. Negligible.

The flip point is when sessions get long. A 4-hour Anthropic Skills session costs $0.32 in runtime alone, on top of token cost. At 50 such sessions per day, that's $480 per month in pure session-hour billing before tokens. A multi-step research session that idles on a web search queue for an hour: $0.08 you wouldn't have paid before. Idle time on a session that's waiting for a downstream service is also billed (Anthropic's docs say metering is to the millisecond, but the meter is still running).
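The session-hour arithmetic is easy to rerun for your own session lengths. A sketch using the rates quoted in this article (not independently checked against Anthropic's price list); token costs are deliberately left out, the 30-day month is an assumption, and sessionRuntimeCost and monthlyRuntimeCost are hypothetical names.

```typescript
// Rates as quoted above; token costs are billed separately and not modeled.
const USD_PER_SESSION_HOUR = 0.08;
const USD_PER_WEB_SEARCH = 10 / 1000;

// Runtime cost of one session: session-hours plus any web searches.
function sessionRuntimeCost(minutes: number, webSearches = 0): number {
  return (minutes / 60) * USD_PER_SESSION_HOUR + webSearches * USD_PER_WEB_SEARCH;
}

// Monthly runtime bill for a fixed daily session count (30-day month assumed).
function monthlyRuntimeCost(minutesPerSession: number, sessionsPerDay: number): number {
  return sessionRuntimeCost(minutesPerSession) * sessionsPerDay * 30;
}
```

Up to floating-point rounding, sessionRuntimeCost(3) and monthlyRuntimeCost(240, 50) reproduce the $0.004 and $480 figures above.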

The chokepoint enforces a hard ceiling that the Managed Agents dashboard does not. The dashboard shows session cost after the fact. callAi.ts throws NonRetryableError mid-workflow before the session starts if the pre-flight estimate would breach the daily cap.
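The ceiling check itself is small. A minimal sketch, assuming spend-so-far has already been summed from ai_call_log (today's rows, and today's rows for this workflow_instance_id); assertWithinBudget and SpendSoFar are hypothetical, and the local NonRetryableError class stands in for the one Cloudflare Workflows exports from cloudflare:workflows, so the sketch runs outside the Workers runtime.

```typescript
// Stand-in for NonRetryableError from "cloudflare:workflows".
// Inside a real Workflow, import that class instead.
class NonRetryableError extends Error {
  constructor(message: string) {
    super(message);
    this.name = "NonRetryableError";
  }
}

// Caps from this article: $20 per day overall, $1 per workflow instance.
const DAILY_CAP_USD = 20;
const INSTANCE_CAP_USD = 1;

interface SpendSoFar {
  todayUsd: number;    // e.g. today's total from ai_call_log
  instanceUsd: number; // same, filtered by workflow_instance_id
}

// Pre-flight: refuse to start a session whose estimate would breach either
// cap. A non-retryable error stops Workflows from retrying a call that
// would fail the same check again.
function assertWithinBudget(estimateUsd: number, spent: SpendSoFar): void {
  if (spent.todayUsd + estimateUsd > DAILY_CAP_USD) {
    throw new NonRetryableError(
      `daily cap: ${spent.todayUsd.toFixed(2)} + ${estimateUsd.toFixed(2)} > ${DAILY_CAP_USD}`,
    );
  }
  if (spent.instanceUsd + estimateUsd > INSTANCE_CAP_USD) {
    throw new NonRetryableError(
      `instance cap: ${spent.instanceUsd.toFixed(2)} + ${estimateUsd.toFixed(2)} > ${INSTANCE_CAP_USD}`,
    );
  }
}
```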

The pre-flight estimate is conservative:

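```typescript
// Fields the estimate reads; the real Brief type has more.
interface Brief {
  body_md: string;
  expected_minutes: number;
}

async function estimateSessionCost(brief: Brief): Promise<number> {
  // Rough heuristic: ~3.5 characters per token.
  const tokenEstimate = brief.body_md.length / 3.5;
  // Input tokens at $3 per million.
  const inputCost = (tokenEstimate / 1_000_000) * 3.0;
  // Assume output is 1.5x the input token count, at $15 per million.
  const outputCost = ((tokenEstimate * 1.5) / 1_000_000) * 15.0;
  // Expected runtime at $0.08 per session-hour.
  const sessionHourEstimate = (brief.expected_minutes / 60) * 0.08;
  // Inflate by 40% so the estimate errs high.
  const safetyMargin = 1.4;
  return (inputCost + outputCost + sessionHourEstimate) * safetyMargin;
}
```

The per-session-hour math is where solo-engineer setups go wrong fastest. Without the ceiling, a single runaway agent (exactly the kind of behavior the outcome retry loop can encourage when a rubric is too strict) eats the day's budget before you notice. I've seen it.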

Keep the chokepoint. Route sessions.create() through it. Log the pre-flight estimate before the session starts. Reconcile against event.session.usage.total_cost_usd from the webhook payload when the session terminates.
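The weekly reconciliation is a few lines once both numbers are logged. A sketch, assuming you log the pre-margin estimate (the sum before the 1.4x safety margin) next to the actual cost the webhook reports; measuring drift on the margined figure would show a built-in +40% on every session. CostSample, estimateDrift, and tuneSafetyMargin are hypothetical names, the ±25% band comes from the checklist below, and rescaling the margin by the observed ratio is one reasonable rule among several.

```typescript
interface CostSample {
  rawEstimateUsd: number; // pre-flight estimate BEFORE the safety margin
  actualUsd: number;      // cost reported by the webhook for that session
}

// Aggregate drift over a window: (estimated - actual) / actual.
// Negative means the raw model under-estimates.
function estimateDrift(samples: CostSample[]): number {
  let est = 0;
  let act = 0;
  for (const s of samples) {
    est += s.rawEstimateUsd;
    act += s.actualUsd;
  }
  return act === 0 ? 0 : (est - act) / act;
}

// Leave the margin alone inside the tolerance band; outside it, scale the
// margin by actual/estimate so the same headroom sits over observed costs.
function tuneSafetyMargin(current: number, drift: number, tolerance = 0.25): number {
  if (Math.abs(drift) <= tolerance) return current;
  if (1 + drift <= 0) return current; // no usable estimates; fix by hand
  return current / (1 + drift);
}
```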

The migration I'm not making yet: multiagent orchestration

Multiagent orchestration is the headline feature from Anthropic Dev Day. Lead agent splits the task, hands sub-tasks to specialist subagents with their own model, prompt, and tools. Subagents work in parallel on a shared filesystem. Lead aggregates. On paper, this is exactly what my Manager DO does today: dispatch to Writer, Discovery, Distribution in parallel via DO RPC, each with its own SQLite state, with R2 + D1 as the shared "filesystem."

Three reasons I'm holding.

One: API churn. Multiagent orchestration is in research preview, behind a separate access request. BetaManagedAgentsSearchResultBlock just landed in 0.102. The block-type surface for multiagent results is still moving. Migrating now means rewriting the integration on every SDK bump for the next two or three releases. The webhook surface stabilized in 0.100; multiagent is still on the move.

Two: shared filesystem is per-session and ephemeral. Inside a Managed Agents session, the shared filesystem disappears when the session terminates. R2 and D1 are durable across all my routines, queryable from any worker, and survive a session crash. Until shared filesystem becomes durable (or until I can mount R2 directly into the session sandbox), this is a feature regression for my workload.

Three: Anthropic's lead/subagent split is opinionated, with a fixed lead-agent role. My Editorial DO is the lead some days (when a publisher routine fires) and a peer other days (when the admin UI dispatches a brief and Editorial joins the pipeline mid-stream). I'd be flattening a useful asymmetry to fit a hosted abstraction.

What flips me: when the shared filesystem becomes durable and cross-session (or when R2 mounts into the sandbox), the migration becomes attractive. Today it's a sidegrade, not an upgrade.

The exact migration checklist for SDK 0.100 to 0.102

Pin the SDK

```bash
uv add anthropic==0.102.0
```

Or pip install anthropic==0.102.0 if you're not on uv yet. Skip 0.100 and 0.101 unless you have a Bedrock-specific reason to stop at 0.101.

Register the webhook in Claude Console

Claude Console → Settings → Webhooks → New endpoint. Point it at https://your-worker.example.com/api/admin/webhooks/managed-agents. Copy the whsec_… secret shown once at creation. Store it in Cloudflare Secrets Store (or AWS Secrets Manager if you're on Claude Platform on AWS via SDK 0.101).

Add the webhook route

Create /api/admin/webhooks/managed-agents. Verify the signature using client.webhooks.unwrap(payload, signature, secret) (the helper landed in v0.95.x and is stable in 0.100). The unwrap call handles HMAC, timestamp tolerance, and event-type parsing in one shot. Do not roll your own HMAC verification: the timestamp-skew window is non-obvious.

Convert the evaluator step

Replace your judge-and-retry loop with outcome={rubric: brief.rubric_md, evaluator: "claude-sonnet-4-5-20250929"} on sessions.create(). Drop your Critic DO and the retry orchestration. Keep the rubric stored in D1; the rubric column becomes more important, not less.

Switch from polling to D1-backed reads

Your status endpoint now reads from D1, keyed on event.session.metadata.brief_id (or whichever correlation key you set in metadata on sessions.create()). The webhook writes; the status endpoint reads. No more workflow.status() round-trips.

Keep the cost chokepoint

callAi.ts stays. Estimate session cost pre-flight. Throw NonRetryableError if the estimate breaches the daily cap. Log post-completion actuals from event.session.usage in the webhook handler. Reconcile pre-flight estimates against actuals weekly; tune the safety margin if estimates drift more than 25%.

Test on the lowest-stakes routine first

Mine was Discovery, because it has no public-facing output if the migration glitches. Run it for 72 hours in parallel with the old path. Diff the outputs. Only then migrate Writer.

Can I use SDK 0.100 with the existing Anthropic Bedrock setup?

Yes, but the dedicated AWS client landed in 0.101. Upgrade to 0.101 or later if you're on Bedrock. SDK 0.101 also added ANTHROPIC_BEDROCK_SERVICE_TIER env var support (default/flex/priority), which 0.100 does not have.

Do webhooks replace the streaming response API entirely?

No. Webhooks fire on session lifecycle events (started, idled, terminated, outcome-evaluation-ended). Streaming responses still flow through messages.create(stream=True) and the regular delta protocol. Webhooks are for "tell me when the long-running session is done." Streaming is for "show me the tokens as they arrive."

What's the actual signing secret format and how do I verify it?

Prefix whsec_…, shown once at creation in Console. Verify via client.webhooks.unwrap(raw_body, signature_header, secret), which handles HMAC, timestamp tolerance, and event-type parsing in one call. Do not log the raw secret. Do not pass it as a query parameter. Keep it in Cloudflare Secrets Store or an equivalent.

Can I run outcomes evaluation without Managed Agents, just via direct Messages API?

No. outcome={rubric, evaluator} is a Managed Agents-only parameter on sessions.create(). You can build the equivalent yourself with the Messages API (which is what my Critic DO does today), but you pay for the orchestration latency and you maintain the code. The whole point of Managed Agents is that you don't.

Does prompt caching still apply inside a Managed Agents session?

Yes, and it cuts input costs by up to 90% on cache hits. Cache diagnostics for the prompt cache beta landed in 0.102, so you can see cache hit rates in the response payload now.

The migration is one afternoon for Discovery, one more for Writer, and the rest follows. Pin 0.102.0. Register the webhook. Delete the Critic DO. Keep the chokepoint.

Jun 2, 2026