Anthropic's $10K Solopreneur Grant vs. My $387/Month Stack

A line-item breakdown of the operating stack behind a working one-person AI company in May 2026, against the new Workday-Anthropic-LISC solopreneur accelerator that funded 15 founders this week. Real Cloudflare, AI Gateway, D1, R2,…

On May 12, Workday Foundation, Anthropic, and LISC announced $150,000 split across 15 solopreneurs – $10K cash, Claude credits, a curriculum, and BDO coaching. I run a six-agent AI publication solo. My actual monthly bill is $387.

What the 15 solopreneurs get on May 12

The package is clean on paper: $10,000 in cash per founder, a Claude credit allocation, the LISC AI-fluency curriculum, and coaching from LISC's Business Development Organization network. Fifteen seats. $150,000 in disclosed cash. The Claude credit envelope is undisclosed.

The curriculum covers what you'd expect a small-business operator to want: strategy, marketing, fulfillment, CRM, financial management. The BDO coaching is the part most operators undervalue.

Elizabeth Kelly, Anthropic's Head of Beneficial Deployments, framed it like this: "Solo founders are some of the country's most determined builders and often the most resource-constrained." That's correct. It's also the safe read. The harder read is that "resource-constrained" means different things at different rungs, and the program's design implicitly chose one.

The timing matters. The program lands the same week Dario Amodei is back in the press betting that the first billion-dollar solopreneur shows up in 2026. Anthropic isn't subtle about the throughline: if a single operator with Claude can run a business that used to need fifty people, then funding fifteen of them is a small bet to learn what the operating shape looks like. I think the bet is right. I also think the package is sized for the wrong constraint, and I'll show you what I mean with my actual bill.

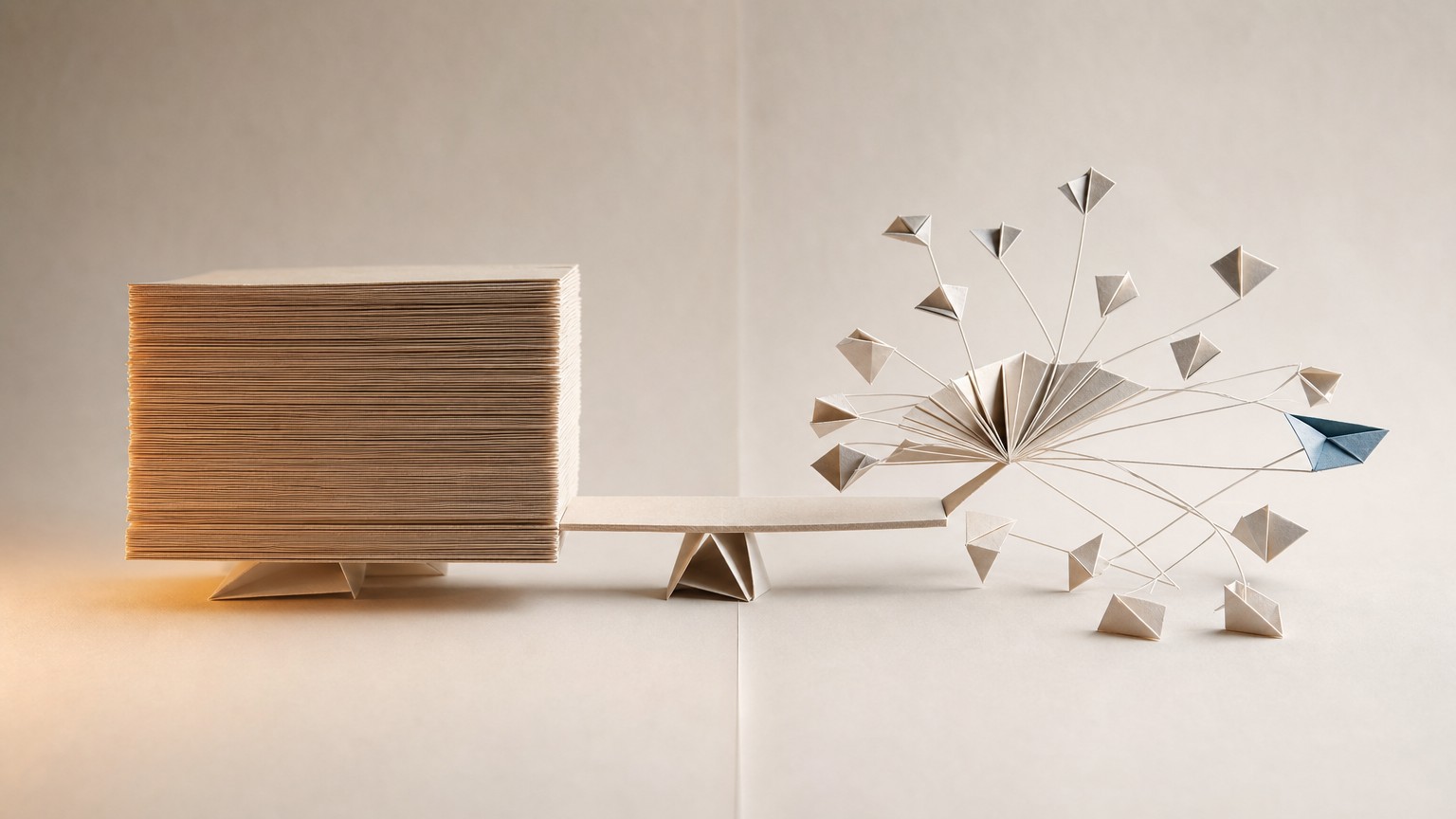

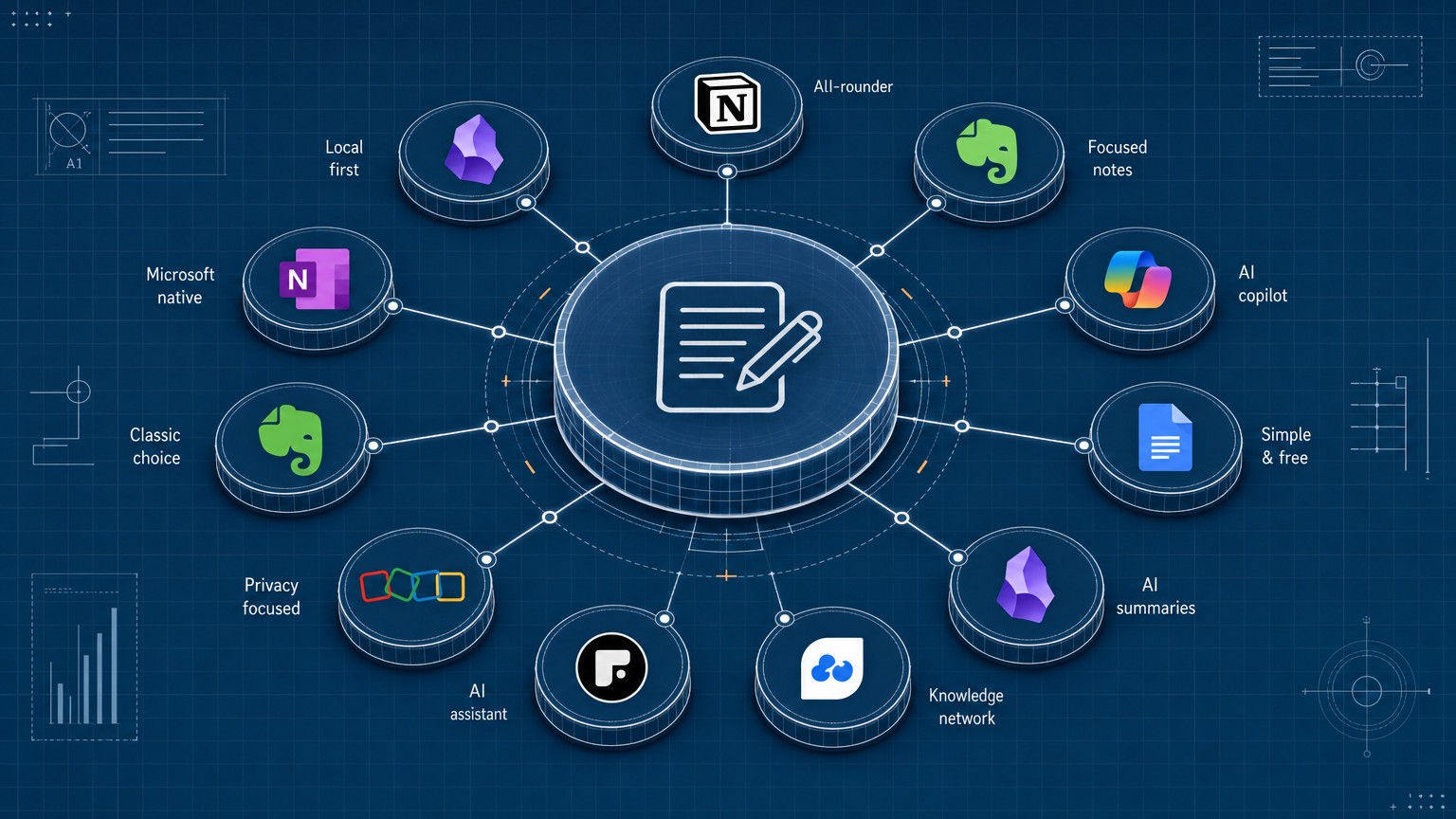

My actual $387/month stack, line by line

Here's the receipt for May. I publish daily through six agents on top of Cloudflare's edge, with Claude doing the heavy lifting and a small constellation of other providers handling specific jobs.

Infrastructure – Cloudflare side: $18/month combined.

Workers Paid is $5/month flat, which bundles 10M requests and 30M ms of CPU. I haven't come close to either limit. D1 is another $5/month base; my publication runs ~120M reads and ~800K writes per month across two databases, and the metered overage rounds to about $1. R2 holds ~30GB of cover images and database snapshots; with zero egress fees, the bill is ~$2/month. Vectorize stores ~50,000 embeddings at 768 dimensions for retrieval; that's ~$3/month with query volume included. AI Gateway itself is free up to 100,000 logged requests per day, which is well above my volume.

Inference – the actual cost center: ~$245/month.

Claude Sonnet 4.6 through AI Gateway runs the writer, the critic, and the fact-checker. Across roughly thirty publishes a month, with prompt caching hitting consistently at 0.1x input cost, that's ~$180/month. Claude Haiku 4.5 handles categorization, brief generation, and distribution metadata – cheap and fast, ~$25/month. Gemini embeddings via Google direct (the gateway adds no value on embedding traffic so I bypass it) come in under $2. Cover generation through gpt-image-2 runs ~30 covers/month at about $0.04 each, ~$1.20. Grok-4.3 via xAI, used for live research with web_search and x_search server tools, is the most variable line – ~$35/month in May.
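To make the caching effect concrete, here is a minimal sketch of the input-side arithmetic. Only the 0.1x cache-read multiplier comes from the paragraph above; the $3.00 per million tokens price, the 80% cached share, and the function name are placeholder assumptions, and the sketch ignores output tokens and any surcharge on cache writes.

```python
def effective_input_cost(
    input_tokens: int,
    cached_fraction: float,
    price_per_mtok: float,
    cache_read_multiplier: float = 0.1,  # cache reads billed at 0.1x input cost
) -> float:
    """Dollar cost of input tokens when part of the prompt is read from cache."""
    if not 0.0 <= cached_fraction <= 1.0:
        raise ValueError("cached_fraction must be between 0 and 1")
    cached = input_tokens * cached_fraction
    fresh = input_tokens - cached
    # Cached tokens count at the discounted rate, fresh tokens at full price.
    billable = fresh + cached * cache_read_multiplier
    return billable / 1_000_000 * price_per_mtok


# Hypothetical numbers: 1M input tokens, 80% served from cache, $3.00 per MTok.
with_cache = effective_input_cost(1_000_000, 0.8, 3.00)
without_cache = effective_input_cost(1_000_000, 0.0, 3.00)
print(f"with cache: ${with_cache:.2f}, without: ${without_cache:.2f}")
```

Under those assumed inputs the cached run costs well under a third of the uncached one, which is why a stable system prompt and shared context matter so much on a writer, critic, and fact-checker loop.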

Data and rendering: ~$72/month.

DataForSEO across nine endpoints feeds the research agents and runs ~$60/month at my volume. Browser Rendering's REST API for headless page operations is another ~$12/month.

Human-side tools: $52/month.

Vercel Pro for the public site is $20. Notion Plus single seat is $10. Claude Max for my own Claude Code and chat usage is $20. 1Password Families is $5. Domain registration and DNS amortize to about $2. Resend, Linear, and a few others sit on free tiers that comfortably cover solo use.

Total: $387/month.
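As a quick check, the four category subtotals above can be added up in a few lines. This is only a sketch of the arithmetic; the dictionary keys and function names are mine, and the figures are the rounded subtotals quoted in this section.

```python
# Category subtotals as stated in the breakdown above (USD per month).
MONTHLY_BILL = {
    "Cloudflare infrastructure": 18,
    "Inference": 245,
    "Data and rendering": 72,
    "Human-side tools": 52,
}


def monthly_total(bill: dict) -> int:
    """Sum every category in the bill."""
    return sum(bill.values())


def reconcile(bill: dict, headline: int) -> int:
    """Difference between the summed categories and the headline figure."""
    return monthly_total(bill) - headline


for category, amount in MONTHLY_BILL.items():
    print(f"{category:<28} ${amount}")
print(f"{'Total':<28} ${monthly_total(MONTHLY_BILL)}")
```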

Two things to notice. First, about 63% of the bill ($245 of $387) is direct LLM inference, more than every other category combined. Second, the entire Cloudflare side of the stack – Workers, D1, R2, Vectorize, AI Gateway – is $18 combined. The compute-and-edge cost has been solved. The remaining pricing pressure on a solo operator's bill now sits almost entirely on inference, and that line moves with how aggressively you run agents, not with how many users you have.

If you want the fuller version of how the six agents coordinate, I wrote about the wiring here.

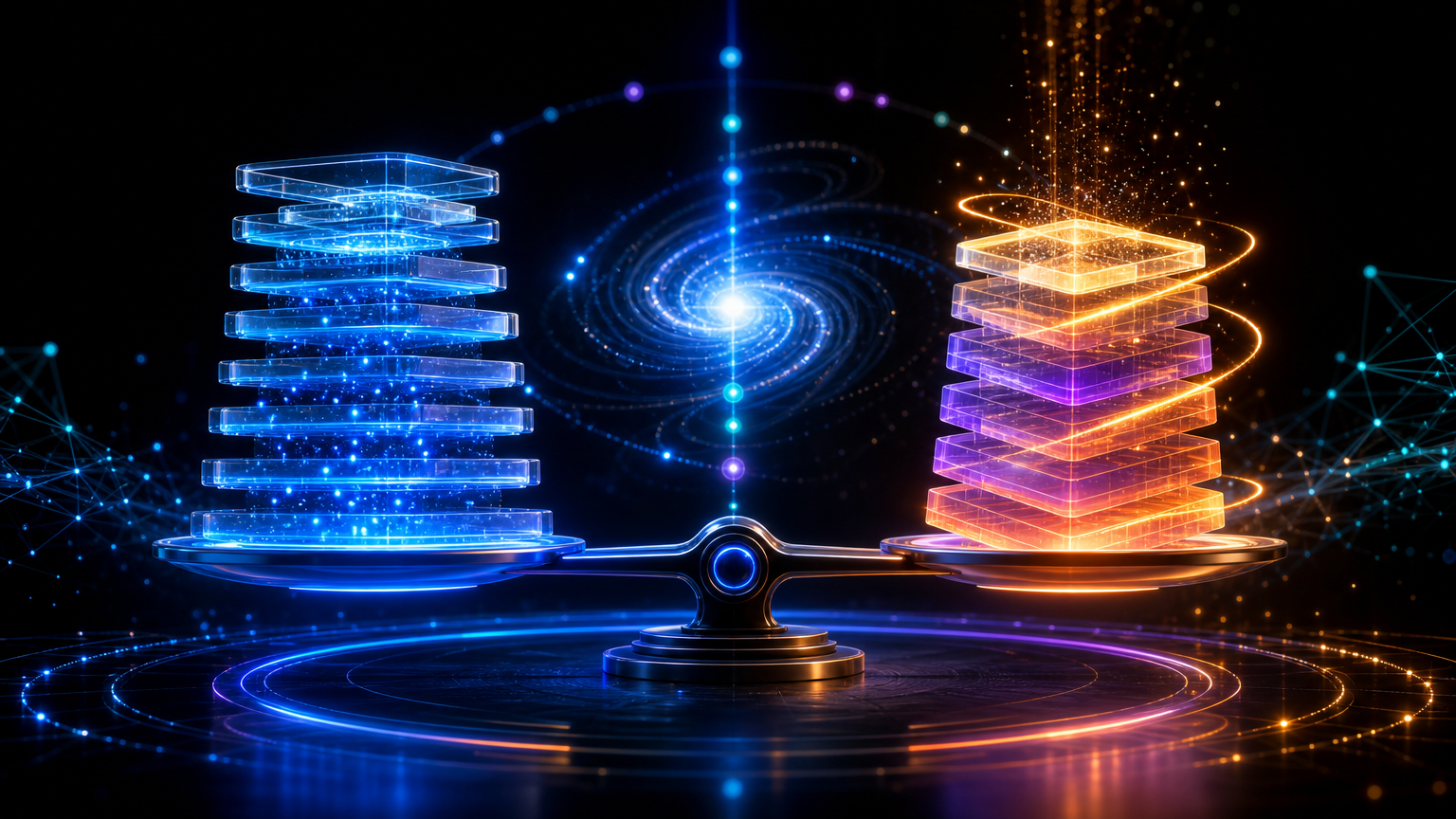

The $10K grant covers 26 months of this stack. So why does it matter what's missing?

The math is straightforward. $10,000 divided by $387 is 25.8 months. On paper, a recipient could run an operating stack like mine for over two years on the cash component alone, before touching the Claude credits.
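The same division as a small helper, in case you want to plug in your own bill. The function name is illustrative; the inputs are the grant's cash component and my monthly total.

```python
def runway_months(cash: float, monthly_cost: float) -> float:
    """Months a cash balance lasts at a flat monthly cost."""
    if monthly_cost <= 0:
        raise ValueError("monthly_cost must be positive")
    return cash / monthly_cost


print(round(runway_months(10_000, 387), 1))  # 25.8
```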

That sounds like enough. It isn't, and the gap is instructive.

Missing line item one: distribution. The published LISC curriculum has no module on growing an audience from zero to first sustainable MRR. Coaches will talk about marketing – they're good at it for traditional small businesses – but the channel mix that works for a solo AI operator is different. The grant doesn't fund this. The curriculum doesn't teach it specifically. It's the largest invisible line item in the actual journey.

Missing line item two: the founder's burn. A solopreneur's operating cost is real but trivial against the founder's personal monthly outflow. Rent, food, health insurance in the US, the partner who is patient but not infinitely patient. The grant doesn't backfill this. If the recipient quits their job to take the program seriously, $10,000 is roughly two months of personal runway in most US metros. If they don't quit, the program's effect is bounded by evenings and weekends, which is exactly the constraint the program claims to be solving.

Missing line item three: inference scaling at usage spikes. "Limited Claude credits" is undefined. Production agents consume tokens unpredictably; one piece that gets traction can 10x monthly inference cost in a week as related agents fire – embedding new vectors, generating distribution variants, re-running retrieval at higher volume. Without a published credit ceiling, the program is implicitly capped at low-velocity builds. That's not bad; it's just a different shape of business than the billion-dollar solopreneur thesis implies.
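A rough sketch of what a spike does to a fixed credit pool. Everything here is an assumption for illustration: a 30-day month, a flat daily baseline derived from my ~$245 inference line, a 10x rate held for seven days, and a hypothetical $1,000 credit pool, since the program's real allocation is undisclosed.

```python
def spiked_month_cost(
    baseline_monthly: float,
    multiplier: float,
    spike_days: int,
    days_in_month: int = 30,
) -> float:
    """Cost of a month in which `spike_days` run at `multiplier` times the daily baseline."""
    if not 0 <= spike_days <= days_in_month:
        raise ValueError("spike_days must fit inside the month")
    daily = baseline_monthly / days_in_month
    normal_days = days_in_month - spike_days
    return daily * (normal_days + spike_days * multiplier)


def months_covered(credit_pool: float, monthly_cost: float) -> float:
    """How many months a credit pool lasts at a given monthly cost."""
    return credit_pool / monthly_cost


HYPOTHETICAL_CREDITS = 1_000.0  # placeholder; the real ceiling is not published
baseline = 245.0
spiked = spiked_month_cost(baseline, multiplier=10, spike_days=7)

print(f"spiked month: ${spiked:.2f}")
print(f"credits last {months_covered(HYPOTHETICAL_CREDITS, baseline):.1f} months at baseline")
print(f"credits last {months_covered(HYPOTHETICAL_CREDITS, spiked):.1f} months if every month spikes")
```

Under these assumptions a single one-week spike roughly triples the month's inference bill, which is the reason a fixed, unpublished ceiling matters more than the headline credit amount.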

The grant is generous and useful, particularly for a first-time builder who needs the cohort structure to ship anything at all. It is not the operating system of a billion-dollar solopreneur. Those are different problems, and the program is solving the first one.

The solopreneur cost curve, rung by rung

Here's the part most coverage of these programs gets wrong: the operating cost of a one-person AI company barely moves as revenue scales. The curve is sub-linear and the margin is preposterous.

At $0 MRR (build phase, months zero to three): $300 to $500 per month is a realistic stack for a serious operator. The goal at this rung isn't revenue – it's shipping the first asset that gets cited, indexed, or shared enough to start the flywheel. The dominant cost is inference, because you're building and rebuilding agents.

At $10K MRR: the same operating stack, plus $200 to $400 per month in distribution tests. Beehiiv or similar, small ad budgets to validate one or two channels, a LinkedIn presence the founder maintains. Total operating cost: $700 to $1,000 per month. Gross margin sits around 92%.

At $100K MRR: the stack climbs to $2,000 to $4,000 per month. More inference, larger retrieval indexes, higher transactional volume, real analytics infrastructure, a fractional CFO showing up monthly. Distribution climbs to $3,000 to $8,000 per month as channels stabilize. Margin holds at 88 to 90% because cost of goods is still dominated by inference, and inference per unit of output has been falling year over year.

At $1M ARR – roughly $83K MRR: the operating stack costs less per month than a single mid-level engineer's loaded compensation. This is the claim under Amodei's billion-dollar thesis. The math works.
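The margin figures in these rungs follow from one formula, margin = 1 - cost / revenue. Here is a sketch that applies it to the cost ranges quoted above, treating all operating cost as cost of goods, which is my simplifying assumption. The function names are illustrative.

```python
def gross_margin(monthly_revenue: float, monthly_cost: float) -> float:
    """Gross margin as a fraction, counting all operating cost as cost of goods."""
    if monthly_revenue <= 0:
        raise ValueError("monthly_revenue must be positive")
    return 1 - monthly_cost / monthly_revenue


def margin_range(monthly_revenue: float, low_cost: float, high_cost: float):
    """(worst, best) margin for a cost range at a given revenue."""
    return (
        gross_margin(monthly_revenue, high_cost),
        gross_margin(monthly_revenue, low_cost),
    )


# Cost ranges taken from the rungs above (USD per month).
rungs = {
    "$10K MRR": (10_000, 700, 1_000),
    # $100K MRR: stack $2,000-$4,000 plus distribution $3,000-$8,000.
    "$100K MRR": (100_000, 2_000 + 3_000, 4_000 + 8_000),
}

for name, (revenue, low, high) in rungs.items():
    worst, best = margin_range(revenue, low, high)
    print(f"{name}: {worst:.0%} to {best:.0%}")
```

The ranges that come out bracket the margins quoted in each rung; where you land inside them depends mostly on how much of the distribution budget you actually spend.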

There's an open problem the cost curve doesn't address. Solopreneurs at $1M ARR are still single points of failure – illness, burnout, a bus. The grant doesn't fund infrastructure that solves this. Neither does Claude itself, directly. What does is succession engineering: runbooks an outsider can execute, agents that self-restart on failure, code and prompts that don't carry founder-only context. That's the Phase 2 conversation and it's not happening publicly yet.
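For the "agents that self-restart on failure" piece, the smallest useful version is a supervisor loop with a restart cap and exponential backoff. This is a generic sketch, not how my agents are actually wired; `run_with_restart` and its parameters are hypothetical names.

```python
import time
from typing import Callable


def run_with_restart(
    agent: Callable[[], None],
    max_restarts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Run `agent`, restarting it after a failure with exponential backoff.

    Returns the number of restarts that were needed. Re-raises the last
    exception once `max_restarts` is exhausted, so a human still gets paged.
    """
    restarts = 0
    while True:
        try:
            agent()
            return restarts
        except Exception:
            if restarts >= max_restarts:
                raise
            # Wait 1x, 2x, 4x ... the base delay before the next attempt.
            sleep(base_delay * 2 ** restarts)
            restarts += 1


if __name__ == "__main__":
    attempts = {"count": 0}

    def flaky_agent() -> None:
        # Fails twice, then succeeds, to exercise the restart path.
        attempts["count"] += 1
        if attempts["count"] < 3:
            raise RuntimeError("transient failure")

    used = run_with_restart(flaky_agent, base_delay=0.01)
    print(f"succeeded after {used} restarts")
```

The cap matters as much as the retry: an agent that restarts forever hides exactly the kind of failure an outsider following a runbook needs to see.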

The curve is real.

What I would have done with $10K in 2024

Looking back at the version of me that started this publication, here's how I would have allocated $10,000 if it had landed in my account eighteen months ago.

60% into distribution experiments. $6,000 across newsletter list growth, sponsorship trials with three or four publications I respect, and a part-time BDR running partnership outreach for ninety days. The single highest-return thing a solo AI operator can buy is an audience that starts compounding before the content does, and almost nobody funds it because it doesn't fit a coaching curriculum.

25% into a content moat. $2,500 into producing three flagship long-form assets – the kind of work that gets cited by AI search engines for years. Not blog posts. Research, datasets, reproducible benchmarks. The asset class that becomes a permanent referral surface.

10% into measurement infrastructure. $1,000 into analytics, attribution, retention loops. Most solopreneurs ship features in the dark for months before they put a single retention chart on the wall. The chart pays for itself in week two.

5% into the tools the curriculum won't teach. $500 into a properly licensed image generator credit pool, Better Auth for the inevitable login flow, Cloudflare credits beyond the free tier for staging environments. Small, boring, load-bearing.
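The split above, written out so you can rerun it against a different budget. The percentages are the ones in this section; the bucket labels and function name are just for illustration.

```python
# Percentage split from the four buckets above (whole percents).
ALLOCATION_PCT = {
    "Distribution experiments": 60,
    "Content moat": 25,
    "Measurement infrastructure": 10,
    "Tools the curriculum won't teach": 5,
}


def allocate(budget: int, split: dict) -> dict:
    """Split a whole-dollar budget by whole-percent shares that must total 100."""
    if sum(split.values()) != 100:
        raise ValueError("shares must add up to 100%")
    return {bucket: budget * pct // 100 for bucket, pct in split.items()}


for bucket, dollars in allocate(10_000, ALLOCATION_PCT).items():
    print(f"{bucket:<34} ${dollars:,}")
```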

What I would not have done is buy more Claude credits. At this stage of a solo operator's life, inference is the cheapest input. The constraints are taste, distribution, and time, in that order.

What to copy from the program design, what to skip

For anyone designing a v2 of a solopreneur accelerator, or for an operator deciding whether to apply to v1, here's the honest split.

Copy: the $10K cash component – real runway, no equity, no strings. The cohort structure – peer pressure does more work than any curriculum module. The AI-fluency curriculum as a baseline – first-time operators need it and won't seek it out otherwise. The BDO coaching network – the part that ages best, because operators forget what a P&L looks like at month seven.

Skip, or at least rethink: the Claude credits as the headline gift. By month four, a recipient who's shipping outgrows the credit allocation; by month four, a recipient who isn't shipping doesn't need it. It's the wrong constraint for the program's headline.

If I were designing v2, the package would be $25,000 cash, a fractional CFO for six months, a distribution coach who has grown a solo brand from zero, and an infrastructure credit pool that scales with revenue rather than capping at a fixed allocation. The cohort and curriculum stay. The headline becomes "we fund the parts you can't see," which is the real ask.

If you're building toward a Claude-heavy operating model regardless of whether you get into the program, I wrote a more detailed breakdown of the Claude for Small Business automation stack that pairs naturally with this one.

How much does an AI-native solopreneur actually spend per month?

For a working six-agent stack with daily publishing, my bill is $387/month. That sits inside the $200 to $500/month range that is typical for solo SaaS founders until about $20K MRR.

Is the Workday-Anthropic-LISC accelerator worth applying to?

For first-time solopreneurs who haven't operated an AI company yet, yes – the curriculum and coaching matter more than the cash. For operators already shipping, the math doesn't pencil unless the coaching and peer-network components are the real differentiator for you.

What's the real cost of running production AI agents in 2026?

On my bill, about 63% of stack cost ($245 of $387) is direct LLM inference. Cloudflare-edge infrastructure – Workers, D1, R2, Vectorize, AI Gateway – is under $20/month combined at moderate volume. The compute side has been solved; pricing pressure now sits on inference and on distribution.

Can a single founder really hit $1M ARR with AI tools?

The operating math allows it. $1M ARR carries roughly $3,000 to $5,000/month in total operating cost, leaving 94%+ gross margin. The unsolved problem isn't unit economics – it's single-point-of-failure risk and the founder's own bandwidth.

The first billion-dollar solopreneur won't win because they had better Claude credits. They'll win because they figured out the three line items nobody is funding.

Jun 2, 2026