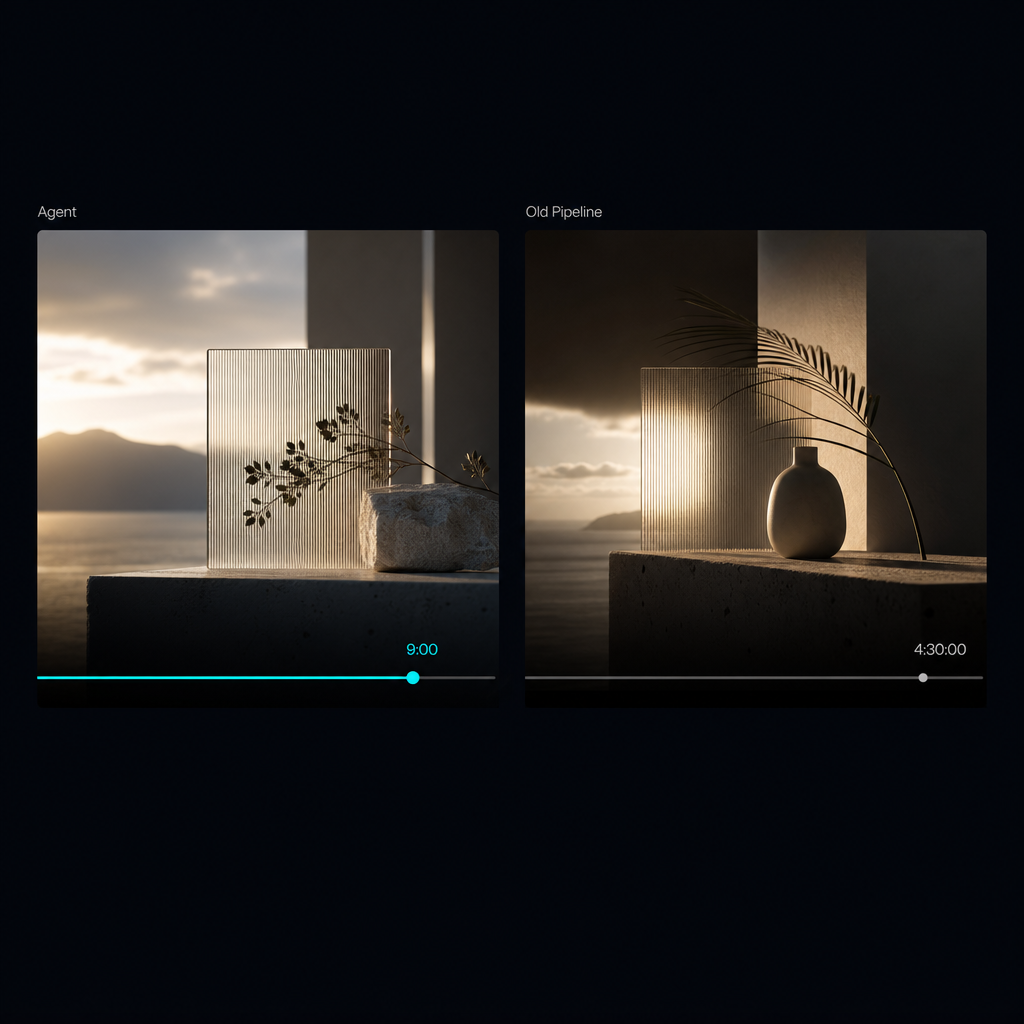

Runway Agent vs 4-Tool Pipeline: 9 min vs 4.5 hrs

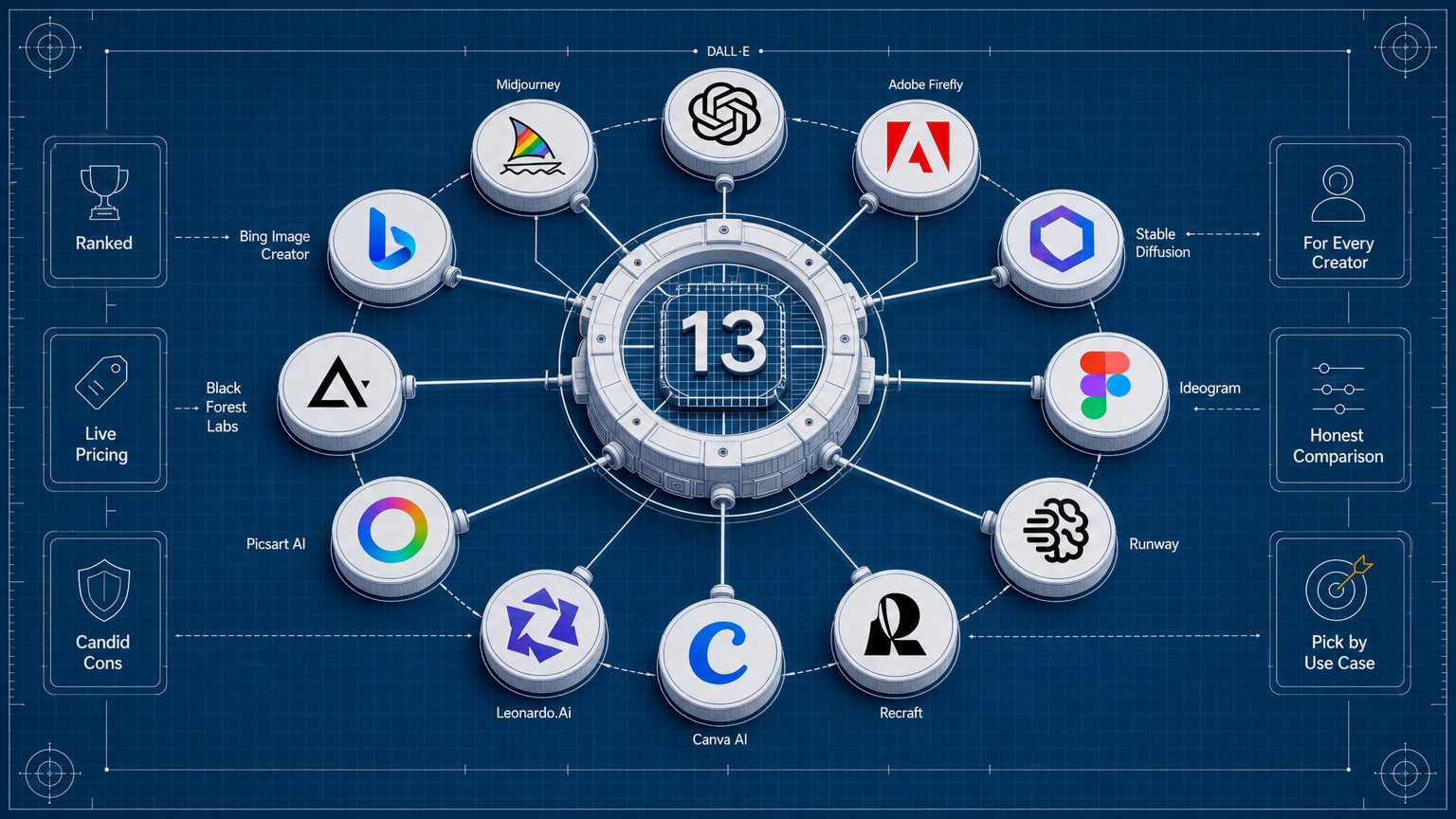


Runway shipped Runway Agent on May 13, 2026: an agentic creative partner that takes a brand brief, proposes the concept, generates voiceover and music, and ships a multi-shot, publish-ready video in minutes. I ran my actual campaign brief through it twice, then through my old four-tool pipeline. Here's how my monthly tool spend dropped from $95/mo to $28/mo, and which tools still earn their seat.

The result: a 30-second brand teaser, both pipelines side by side

The brief was a 30-second soft-launch teaser for a UAE F&B brand I'm working with through DVNC.studio: three reference images (mood frame, brand palette swatch, product hero), a paragraph of voice notes, and two deliverables – 16:9 master and a 9:16 social cut.

Runway Agent time-to-publish: 9 minutes, including two conversation turns to refine pacing and palette.

Old four-tool pipeline time-to-publish: 4.5 hours wall time across Midjourney, Runway, ElevenLabs, and DaVinci.
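For scale, the two time-to-publish figures above can be put on the same unit. This is only a quick arithmetic sketch using the numbers reported in this piece; the variable names are mine.

```python
# Wall-clock figures reported above for the same 30-second teaser brief.
AGENT_MINUTES = 9
PIPELINE_HOURS = 4.5

pipeline_minutes = PIPELINE_HOURS * 60             # 4.5 hours expressed in minutes
speedup = pipeline_minutes / AGENT_MINUTES         # how many Agent runs fit in one pipeline run
minutes_saved = pipeline_minutes - AGENT_MINUTES   # time handed back per teaser

print(f"{speedup:.0f}x faster, {minutes_saved:.0f} minutes saved per teaser")
```

On these numbers the Agent run is roughly 30 times faster, which hands back a little over four hours per teaser.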

The one-sentence verdict before I unpack the math: Agent is the new default for campaign-cadence content. The old pipeline still wins where the brand world is bespoke and the color story has to lock to hex.

What Runway Agent is, as of May 13, 2026

Runway frames Agent as an agentic creative partner. You describe a brief and the agent proposes a concept, story beats, and a full visual direction, then assembles a multi-shot video with native voiceover, dialogue, and music into a publish-ready cut. Reference images, aspect ratio, duration, and audio preferences are set up front. The timeline editor handles final-mile adjustments – trim, swap shots, export.

Runway's launch post names the target users explicitly: brand marketers, performance marketers, creative directors at agencies, social and content leads, comms teams running lean, indie filmmakers, and production teams running previs. That list is honest about who the tool is for. Runway is not pitching it at the senior film colorist. It is pitching it at the people shipping two to twelve cuts a month who used to assemble them across four tools.

Under the hood, the renders are Gen-4.5. The change is not the model. The change is that the model is now wrapped in a conversation that holds the whole pipeline.

The brief I ran, and how the conversation went

The opening prompt, paraphrased: "30-second soft-launch teaser for a premium UAE F&B brand. Three reference images attached: a golden-hour mood frame, a warm-neutral palette swatch with a single electric cyan accent, a hero product shot of the bottle on a stone surface. Tone: confident, slow, editorial. Off-screen narration, one line, English with neutral accent. 16:9 master. Cinematic, restrained."

Turn 1. The agent proposed a three-shot concept: a wide establishing shot of the stone surface, a medium push-in on the bottle, a slow pull-back revealing the brand mark. It wrote the narration line. It pre-selected a warm-toned music bed. I asked for a cooler color palette across shots two and three, and slower pacing on the push-in.

Turn 2. The agent revised the story beats and the shot order. The cyan accent appeared in the brand mark reveal, which was the read I wanted. I locked the direction.

Generation. Seven minutes to a 30-second 1080p MP4 with native voiceover and an ambient music bed already mixed under it.

Timeline editor. I trimmed two seconds off shot two, swapped the third beat for a tighter alternative the agent had generated as a variant, exported the 16:9 master, then cropped the 9:16 cut in the same editor.

What surprised me: voiceover prosody is good enough for social cuts. Not good enough for a 60-second hero film, but for an Instagram first frame and a TikTok hook, fine. The pacing of the cut points is competent – better than I expected from an agent picking them.

What broke: brand palette drifted in shot two. The reference image upload anchors the look, but it does not lock exact hex values. For a client where the cyan is the brand, plan on a color pass.
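One rough way to put a number on that drift is to compare a colour sampled from the rendered frame against the brand hex. The sketch below is an illustration only: both hex values and the tolerance are hypothetical, and a per-channel RGB difference is a crude stand-in for the perceptual comparison a colorist would make in Resolve.

```python
def hex_to_rgb(hex_code: str) -> tuple[int, int, int]:
    """Convert '#RRGGBB' into an (r, g, b) tuple of 0-255 integers."""
    h = hex_code.lstrip("#")
    return tuple(int(h[i:i + 2], 16) for i in (0, 2, 4))


def max_channel_drift(brand_hex: str, sampled_hex: str) -> int:
    """Largest absolute difference across the R, G and B channels."""
    brand = hex_to_rgb(brand_hex)
    sampled = hex_to_rgb(sampled_hex)
    return max(abs(b - s) for b, s in zip(brand, sampled))


BRAND_CYAN = "#00E5FF"     # hypothetical brand accent, not the client's real value
SAMPLED_SHOT_2 = "#19D2F0" # hypothetical colour picked from the rendered frame
TOLERANCE = 8              # hypothetical per-channel tolerance

drift = max_channel_drift(BRAND_CYAN, SAMPLED_SHOT_2)
needs_color_pass = drift > TOLERANCE
print(drift, needs_color_pass)
```

If the drift exceeds whatever tolerance the client's brand guidelines allow, that shot goes through the color pass.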

My old pipeline, four tools, 4.5 hours, $95/mo before this week

Here's the stack I was running through May 12.

Midjourney V8.1 Standard, $10/mo. Moodboard frames in roughly 25 minutes. V8.1's faster render helps the explore loop – you stay in flow instead of waiting on grids. This is where I authored the look before any motion work began.

Runway Unlimited, $76/mo annual or $95 monthly. Image-to-video and Gen-4.5 fills, Explore Mode for iteration, credits burned on the finals. About 90 minutes from selected stills to approved shots.

ElevenLabs Creator, $22/mo. Voiceover with a neutral accent option, around 12 minutes including a re-record because the first read landed too soft on the brand-name pickup.

DaVinci Resolve, free. Assembly, color, sound bed, export ladders for both 16:9 and 9:16. About 3 hours. This is where the brand palette gets enforced, the cut points get tightened, and the vertical audio mix gets rebalanced so the narration sits above the music bed on phone speakers.

Where the human direction was load-bearing in the old pipeline: brand palette enforcement across shots, the precise cut points between beats, and the audio mix on the 9:16 vertical. Before Agent, the cost per published teaser came to about $3.10 in compute credits, plus 4.5 hours of my time.

At a studio billing rate, the 4.5 hours is the real number.
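To see why the hours dominate, here is a minimal sketch that prices the labor. The hourly rate is a placeholder I made up for illustration, not a figure from this piece, and the Agent run's credit cost is left out because I did not measure it per teaser.

```python
HOURLY_RATE_USD = 100.0  # hypothetical billing rate; substitute your own


def labor_cost(hours: float, rate: float = HOURLY_RATE_USD) -> float:
    """Cost of operator time at a flat hourly rate."""
    return hours * rate


# Old pipeline: about $3.10 in compute credits plus 4.5 hours of time.
old_compute_usd = 3.10
old_total_usd = old_compute_usd + labor_cost(4.5)

# Agent run: 9 minutes of time (compute credits not included here).
agent_labor_usd = labor_cost(9 / 60)

print(f"old pipeline: ${old_total_usd:.2f}, agent labor: ${agent_labor_usd:.2f}")
```

At any plausible rate the compute credits are a rounding error next to the time.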

The cost ladder: Pro at $28/mo with the RUNWAY50 code

Runway's current tiers, paid annually:

- Standard, $12/mo, 625 credits. Too tight for any campaign-cadence work. Fine for personal experiments.

- Pro, $28/mo annual or $35 monthly, 2,250 credits. 4K rendering, watermark-free exports, priority queue. This is the operator tier.

- Unlimited, $76/mo annual or $95 monthly, 2,250 credits plus Explore Mode. Explore Mode is unlimited at relaxed quality, but Veo 3.1 and Veo 3 stay credit-billed inside it.
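The tier list above can be laid out as data to compare annual against monthly billing. This is a sketch built only from the prices quoted in this piece; the Standard monthly price is not quoted here, so it is left as None rather than guessed.

```python
# Prices in USD per month, as quoted above. None = not stated in this piece.
TIERS = {
    "Standard":  {"annual_rate": 12, "monthly_rate": None, "credits": 625},
    "Pro":       {"annual_rate": 28, "monthly_rate": 35,   "credits": 2250},
    "Unlimited": {"annual_rate": 76, "monthly_rate": 95,   "credits": 2250},
}


def yearly_saving(tier: str):
    """USD saved over 12 months by paying annually instead of monthly."""
    t = TIERS[tier]
    if t["monthly_rate"] is None:
        return None  # cannot compare without a monthly price
    return (t["monthly_rate"] - t["annual_rate"]) * 12


for name in TIERS:
    print(name, yearly_saving(name))
```

On these prices, annual billing saves $84 a year on Pro and $228 a year on Unlimited.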

RUNWAY50 is a 50%-off Pro promo for the first month at launch. It ends Monday, May 18.

For a Dubai studio doing the AED math: Pro annual at $28/mo is about AED 103/mo. With RUNWAY50 the first month lands near AED 51. I was spending roughly AED 350 across the four-tool stack.
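The AED figures follow from the dirham's fixed peg of 3.6725 to the US dollar. A small sketch of the conversion, with helper names of my own choosing:

```python
AED_PER_USD = 3.6725  # the dirham's official peg to the US dollar


def usd_to_aed(usd: float) -> float:
    return usd * AED_PER_USD


def aed_to_usd(aed: float) -> float:
    return aed / AED_PER_USD


pro_annual_aed = usd_to_aed(28)        # Pro, billed annually
pro_promo_aed = usd_to_aed(28 * 0.5)   # first month with 50% off
old_stack_usd = aed_to_usd(350)        # the old four-tool spend, back in dollars

print(round(pro_annual_aed), round(pro_promo_aed), round(old_stack_usd))
```

Rounded, that gives about AED 103 for Pro, AED 51 for the promo month, and about $95 for the old stack.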

The decision rule I landed on:

- Three or more campaign teasers per month longer than 30 seconds, the Agent wins.

- One bespoke campaign per quarter where the brand world is hand-built, the old pipeline still pays for the craft.
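Written as a function, the rule looks roughly like this. It is a sketch of my own heuristic, not anything Runway publishes; the function name is made up, and letting the bespoke check take precedence is an assumption, since the two bullets do not say which wins when both apply.

```python
def pick_pipeline(teasers_per_month: int, bespoke_brand_world: bool) -> str:
    """Rough encoding of the two-bullet decision rule above."""
    # Assumption: a hand-built brand world overrides volume.
    if bespoke_brand_world:
        return "four-tool pipeline"
    if teasers_per_month >= 3:
        return "Runway Agent"
    # The rule is silent below three teasers a month.
    return "no clear winner"


print(pick_pipeline(4, bespoke_brand_world=False))
print(pick_pipeline(1, bespoke_brand_world=True))
```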

I'm running both, but the monthly spend dropped to Pro plus DaVinci (free) plus one Midjourney seat for moodboarding. The ElevenLabs Creator seat got paused.

What Runway Agent replaces in my stack, and what it doesn't

Replaces ElevenLabs Creator for non-bespoke voiceover. Social cuts, product reels, always-on content where you need a competent neutral read in under a minute. Not for a named voice clone or a specific Arabic dialect inflection, where ElevenLabs still wins decisively.

Replaces DaVinci Resolve for sub-60-second social-first cuts. The Agent's timeline editor handles trim, swap, and export without leaving the conversation. Not for any deliverable where the color story has to lock to brand hex – that still wants the Resolve pass.

Partially replaces Midjourney V8.1 for moodboarding. Reference image upload plus conversational refinement covers about 80% of the explore loop. I'm keeping my Midjourney seat for the 20% where I'm authoring a brand world from scratch, but I'm using it less.

Does not replace bespoke brand-world development. The agent's concept proposals are competent. They are not distinctive. A senior creative director will read three proposals and recognize the median. Distinct work still comes from the human direction.

Does not replace human direction on cut points, palette fidelity, or audio mix on a vertical cut. Those are the things I still handled in the 9-minute Agent run, just inside the Agent's editor instead of inside DaVinci.

The conversation pattern that wins

After running the same brief through Agent twice, then three subsequent client briefs, this is the pattern that produces publish-ready output on the first or second turn:

- Upload reference images before describing the brief in prose. The agent anchors on visuals first, words second. Reverse that order and the concept proposal drifts.

- Use two or three conversation turns maximum. Past turn three, iterations start losing creative coherence and you burn credits chasing a direction that was right two turns ago.

- Lock aspect ratio and duration up front. Mid-flow changes lose the composition logic the agent built and cost credits to redo.

- Treat the timeline editor as final-mile only. Trim, swap, export. Do not try to restructure the story there.

- For 9:16 social, generate the 16:9 master first and crop in the editor. The agent composes better wide than vertical right now.

- When the brand palette matters, plan for a 10-minute DaVinci color pass on the master before exporting variants. Pretending this step is gone is how clients reject the deliverable.

That sixth point is the one that almost got cut from this piece because it sounds like a caveat. It is the most important rule.
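The six rules can be turned into a pre-flight checklist to run before opening a conversation. This is a sketch of my own working habit, not a Runway API: every class, field, and function name below is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SessionPlan:
    """Hypothetical description of how an Agent session is planned."""
    references_uploaded_first: bool
    planned_turns: int
    aspect_and_duration_locked: bool
    restructure_in_editor: bool
    master_aspect: str        # e.g. "16:9"
    palette_critical: bool
    color_pass_planned: bool


def preflight(plan: SessionPlan) -> list[str]:
    """Return one warning per rule the plan breaks."""
    warnings = []
    if not plan.references_uploaded_first:
        warnings.append("Upload reference images before the prose brief.")
    if plan.planned_turns > 3:
        warnings.append("Keep the conversation to two or three turns.")
    if not plan.aspect_and_duration_locked:
        warnings.append("Lock aspect ratio and duration up front.")
    if plan.restructure_in_editor:
        warnings.append("Use the timeline editor for trim, swap, export only.")
    if plan.master_aspect != "16:9":
        warnings.append("Generate the 16:9 master first, then crop to 9:16.")
    if plan.palette_critical and not plan.color_pass_planned:
        warnings.append("Plan a DaVinci color pass before exporting variants.")
    return warnings


plan = SessionPlan(True, 2, True, False, "16:9", True, False)
for warning in preflight(plan):
    print(warning)
```

With this plan, the only warning printed is the color pass reminder.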

The takeaway in design terms

Runway Agent is the first AI tool that meaningfully compresses the brand-video pipeline for social-cadence content. Nine minutes against 4.5 hours is not a marginal improvement. It is a different category of work.

What it does not do is replace the senior designer's job. It replaces the production layer below them. The 4.5 hours that got compressed were assembly hours, voiceover-pickup hours, export-ladder hours. The art direction was still 80% of the work, even at 9 minutes.

The work moves up. More time on brand-system definition, color-story authoring, narrative direction. Less time on cut points and voiceover takes. For a studio principal, that is a good trade.

The tool I dropped after one week of Agent use: ElevenLabs Creator for non-bespoke voiceover. The tool I'm keeping forever: DaVinci Resolve, because every bespoke client project still needs the human color pass.

If you're a designer-founder running two or more campaigns per month, run the RUNWAY50 code on your next brief before Monday, May 18. Worst case, you've spent AED 51 on a real comparison against your own pipeline. That's the only test that matters.

For the sister piece on the Webflow Premium pricing math I ran the same week, see Webflow Just Killed CMS and Business Plans for "Premium". For the paid-social side of the same campaign – the static ad-creative stack that runs against the teaser cuts – see the Meta Andromeda creative stack experiment and AdCreative.ai vs Pencil Pro on real spend.

Is Runway Agent available on the Standard plan or do you need Pro?

Available now across paid plans, but Pro at $28/mo annual ($35 monthly) unlocks watermark-free 4K exports and a priority queue. Both are load-bearing for client deliverables.

Does Runway Agent generate native audio, or does it just give you a silent video?

It generates the full package – voiceover, dialogue, and music bed – assembled into the multi-shot video before handing back to the timeline editor. You can swap the music bed in the editor if the first pass misses.

Can the Agent hold a specific brand palette across multiple shots?

Partially. Reference image upload anchors the look but does not lock exact hex values. Expect color drift in shot two or three and plan for a DaVinci color pass on high-craft deliverables.

How does Runway Agent compare to Higgsfield's Marketing Studio?

Runway Agent wins on the end-to-end single-conversation flow that does not require exporting to a separate editor. Different tools for different briefs.

When does the RUNWAY50 50%-off Pro discount expire?

Monday, May 18, 2026. If you're reading this on launch week, you have a window of days, not weeks.

Jun 2, 2026