14-Day GEO Playbook: Get Cited by ChatGPT & Perplexity

A 14-day execution plan for getting cited by ChatGPT, Claude, and Perplexity that ignores traditional keyword work and rebuilds around the four citation surfaces AI engines actually pull from: structured answer blocks, Reddit threads,…

Reddit drives 46.5% of Perplexity's citations. Ninety percent of ChatGPT's cited URLs sit outside Google's top 20.

The citation-share data that changes the budget question

The shift that matters is not "AI is reshaping search." That sentence is six quarters old. The shift that matters is where AI engines pull their answers from, because that determines which surfaces deserve marketing budget and which do not.

Three numbers carry the argument. First, 90% of the URLs ChatGPT cites in its answers come from outside Google's top 20 results. The AI engines are not just re-ranking Google – they are pulling from a substantially different corpus. Second, Reddit alone accounts for 46.5% of Perplexity's citations, with Wikipedia and primary-data sites taking most of the rest. Third, Conductor's 2026 survey of digital marketing leaders found that 97% report positive impact from GEO work, GEO has grown to roughly 12% of average digital marketing budgets, and 32% of leaders rank it as their top priority for the year.

Read those three numbers as a budget question. If nearly half of Perplexity's citations come from Reddit threads, and most of ChatGPT's come from sources Google does not rank in its top 20, then the surfaces that win AI citations are not the surfaces most teams optimize. The backlink-and-keyword playbook is not wrong; it is incomplete in a way that is now measurable. The teams winning citation share in 2026 are reallocating roughly 12% of total digital spend to four content surfaces that did not exist as line items two years ago.

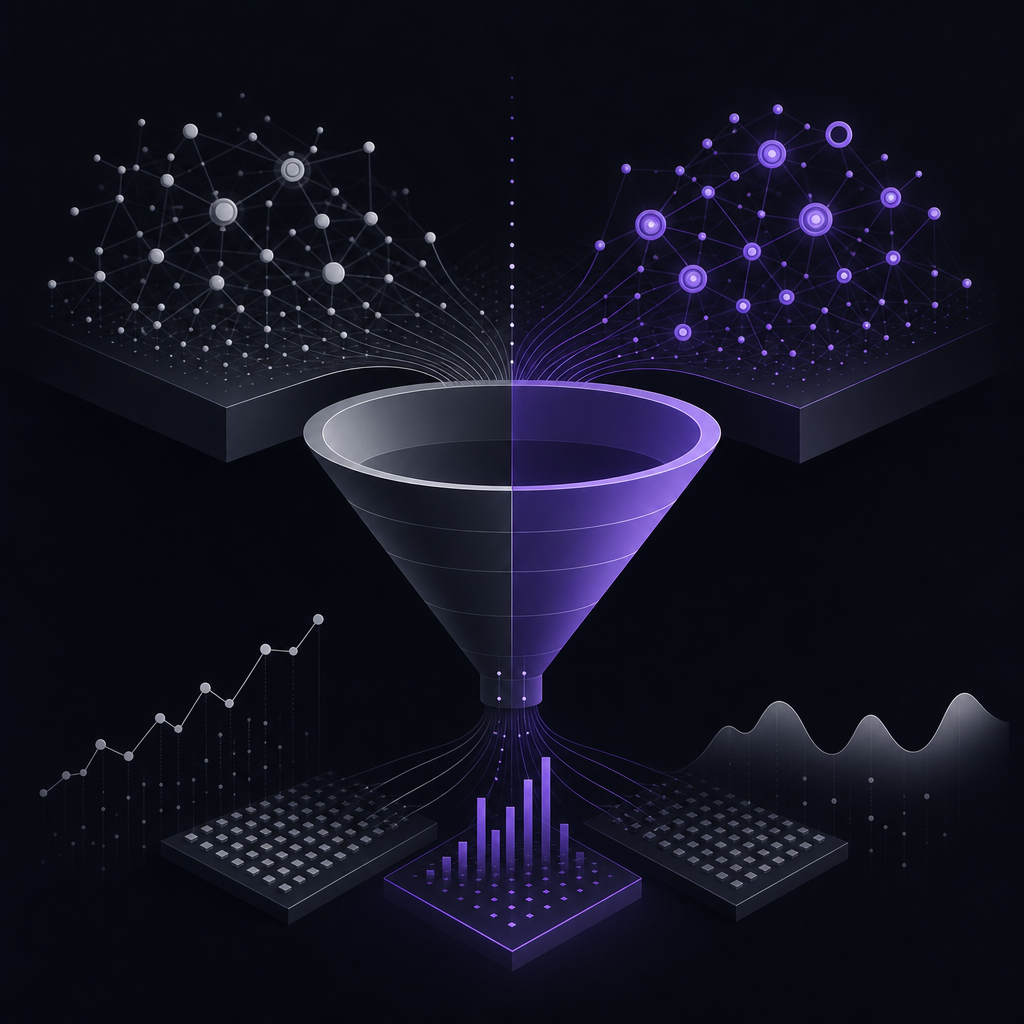

The 4-surface model: where AI engines actually find their answers

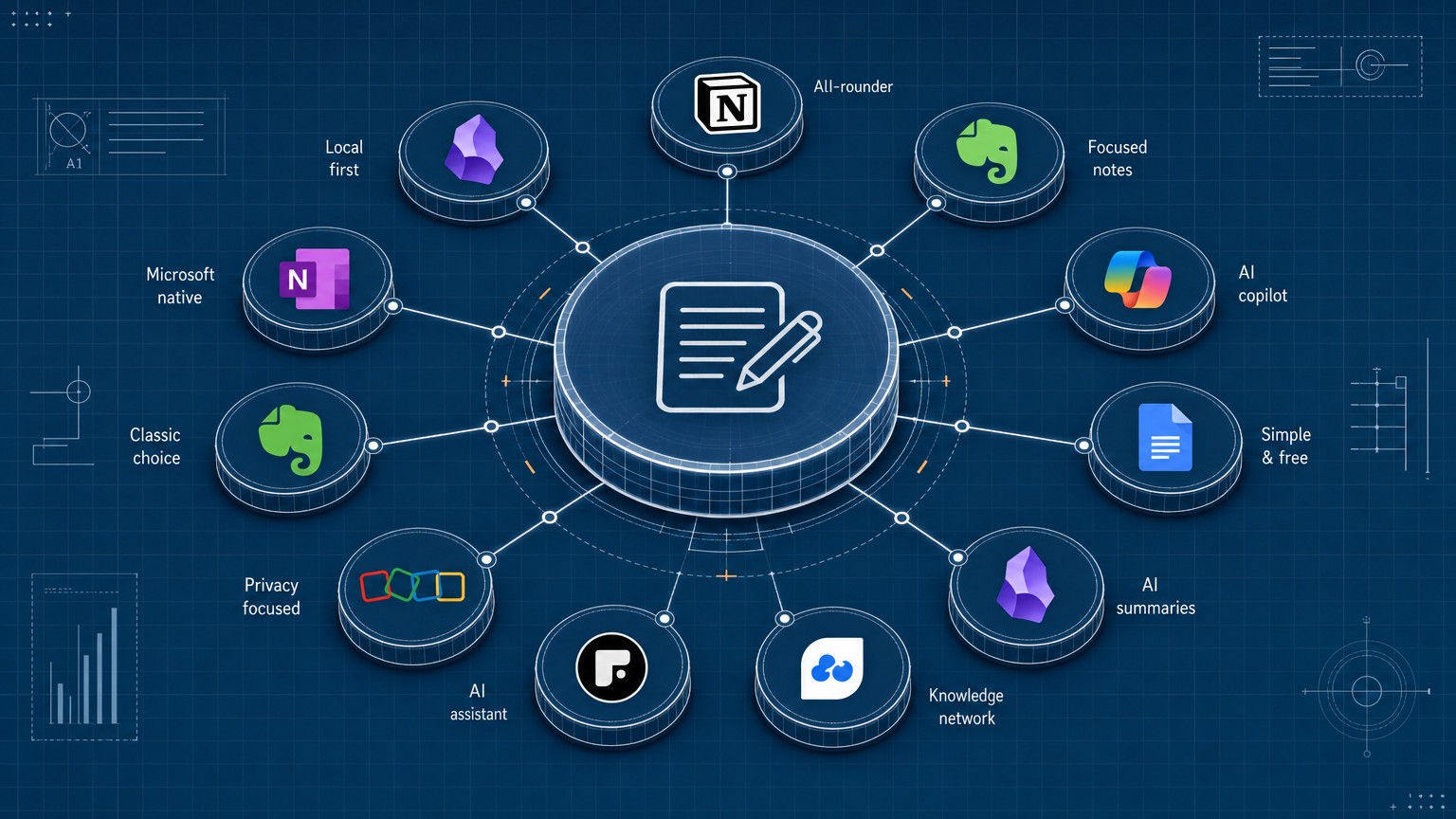

Every AI search engine – ChatGPT, Claude, Perplexity, Gemini, Google AI Overviews – pulls its citations from some weighted combination of four surfaces. The weights differ by engine. The surfaces do not.

Surface 1: extractable structured blocks on your own pages. AI engines reward content that answers the question in the first 50 words, uses question-shaped H3 headings, and ships structured data the model can lift cleanly. This is the Bottom-Line-Up-Front (BLUF) pattern. It is the single highest-impact change most pages need.

Surface 2: community signal. Reddit, Quora, Hacker News, niche industry forums, Stack Overflow for technical categories. Perplexity weights this heaviest. ChatGPT weights it moderately.

Surface 3: original data and primary research. Wikipedia, industry reports, original studies, first-party benchmarks. This is the surface that earns long-term durability – original data gets cited for years, opinion gets cited for weeks.

Surface 4: freshness signal. Pages older than 14 days without updates show roughly a 23% drop in citation rate across the major AI engines. This is a recency penalty, not a quality penalty – the model has no way to distinguish an evergreen 2024 page from a stale one, so it weights recent content higher.

The engine-to-buyer mapping matters here. If your buyer uses Perplexity for technical research, you cannot ignore Reddit. If your buyer uses ChatGPT for product comparison, you cannot ignore extractable comparison tables on your own pages. Pick your weights based on which engine your category lives on, not on which engine has the highest brand recognition.

The GEO measurement stack (with real prices)

You cannot manage what you cannot count, and most teams are counting wrong. Here is the actual tool ladder.

Otterly.AI at $29/month is the entry point. It tracks visibility across ChatGPT, Perplexity, and Google AI Overviews with prompt-based monitoring. For a solo operator or a team under five, this is enough to baseline citation share for 15-20 buyer-intent prompts and watch the trend line move week over week.

Siftly is a pure-play AI mention tracker. No public pricing – you book a demo. Narrower scope than Profound but stronger fidelity on the engines it covers. Worth the call if your category lives heavily on one or two engines.

Profound at $499/month is the enterprise tier. It tracks 10+ engines including Grok and Meta AI, ships a Conversation Explorer for spotting hallucinations about your brand, and is the tool most serious in-house teams use once they cross $5K/month in content spend. The price is justified when the alternative is making strategic bets blind.

Ahrefs Brand Radar at $828/month all-in (Ahrefs at $129 plus the Brand Radar $699 bundle) is where most marketing teams land by default because Ahrefs is already in the stack. This is a mistake. A documented review tracked a brand that received 123 ChatGPT mentions over a measurement window; Brand Radar reported 3. That is roughly 97.6% under-reporting on the engine that matters most for B2B buyers. Brand Radar is fine for blue-link brand monitoring. It is not a GEO measurement tool yet, regardless of how it is positioned.
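The under-reporting figure is plain arithmetic, and it is worth running against your own tool before you trust its dashboard. A minimal sketch, assuming you have a manual count to compare against; the function name is hypothetical and not part of any vendor's API:

```python
def under_reporting_rate(observed_mentions: int, reported_mentions: int) -> float:
    """Share of manually observed mentions that the tool failed to report."""
    if observed_mentions <= 0:
        raise ValueError("observed_mentions must be positive")
    missed = observed_mentions - reported_mentions
    return missed / observed_mentions


# Figures from the review cited above: 123 mentions observed, 3 reported.
rate = under_reporting_rate(123, 3)
print(f"{rate:.1%}")
```

Anything above a few percent means the tool and the manual audit are not measuring the same thing, and the manual audit is the one to believe.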

The selection rule: pick the tool that maps to the engine that maps to your buyer. Not the loudest brand, not the one already in your stack. For most teams, $30 to $500 per month is enough – measurement budget is not the constraint.

Days 1-3: citation-share audit and content extractability pass

Day 1 – baseline audit. Write 15-20 prompts a real buyer would type into ChatGPT, Claude, Perplexity, and Google AI Overviews. Mix navigational, comparative, and transactional intent. Run each prompt against each engine. Log every cited URL by hand into a spreadsheet – domain, surface (own site / Reddit / Wikipedia / competitor / publication), engine. If your budget is under $30/month, this manual pass is your measurement system for the sprint.
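If you would rather log the audit as a CSV than a hand-built spreadsheet, the row shape can be sketched as below. The field names and the example row are assumptions for illustration; use whatever columns your team already tracks.

```python
import csv
import io
from dataclasses import dataclass, asdict, fields


@dataclass
class Citation:
    prompt: str   # the buyer-intent prompt that was run
    engine: str   # e.g. "chatgpt", "perplexity"
    url: str      # cited URL exactly as shown in the answer
    domain: str   # host of the cited URL
    surface: str  # "own_site", "reddit", "wikipedia", "competitor", "publication"


def to_csv(rows: list[Citation]) -> str:
    """Serialize audit rows to CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(Citation)])
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))
    return buffer.getvalue()


# Hypothetical example row; the URL is a placeholder, not a real thread.
example_rows = [
    Citation(
        prompt="best crm for startups",
        engine="perplexity",
        url="https://www.reddit.com/r/sales/comments/abc/example/",
        domain="reddit.com",
        surface="reddit",
    )
]
csv_text = to_csv(example_rows)
```

One row per cited URL keeps the later share calculations trivial: every metric in the sprint is a count over these rows.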

Day 2 – Citation Share of Category. Calculate, per engine, what percentage of total citations across your 20 prompts go to your domain versus each competitor versus community surfaces. This is your starting number. The point of the sprint is to move it.
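Citation Share of Category is a per-engine count divided by a per-engine total. A minimal sketch, assuming the audit log has been reduced to (engine, cited domain) pairs; the rows below are made up for illustration, not measured data:

```python
from collections import Counter, defaultdict


def citation_share(rows: list[tuple[str, str]]) -> dict[str, dict[str, float]]:
    """rows are (engine, cited_domain) pairs, one per cited URL.

    Returns {engine: {domain: share of that engine's citations}}.
    """
    counts: dict[str, Counter] = defaultdict(Counter)
    for engine, domain in rows:
        counts[engine][domain] += 1
    shares = {}
    for engine, counter in counts.items():
        total = sum(counter.values())
        shares[engine] = {domain: n / total for domain, n in counter.items()}
    return shares


# Made-up audit rows for illustration only.
rows = [
    ("perplexity", "reddit.com"),
    ("perplexity", "reddit.com"),
    ("perplexity", "yourbrand.example"),
    ("perplexity", "competitor.example"),
    ("chatgpt", "competitor.example"),
    ("chatgpt", "yourbrand.example"),
]
shares = citation_share(rows)
print(shares["perplexity"])
```

Keeping the shares split by engine matters because the weights differ by engine; a blended number hides exactly the difference you are trying to act on.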

Day 3 – extractability pass on your top 5 pages. For each page, rewrite the first 50 words as a direct answer to the page's primary question. Convert dense H2 sections into question-shaped H3s ("How does generative engine optimization work?" not "GEO Fundamentals"). Ship the JSON-LD triple stack: FAQPage schema for the FAQ section, HowTo schema where you have procedural content, Article schema with datePublished and dateModified populated.
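The triple stack can be generated rather than hand-written. The sketch below builds the three schema.org objects as plain dictionaries and wraps each in a script tag; the helper names, example text, and dates are placeholders, and only the most basic properties of each type are shown.

```python
import json


def faq_page(pairs: list[tuple[str, str]]) -> dict:
    """FAQPage with one Question/Answer pair per (question, answer) tuple."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def how_to(name: str, steps: list[str]) -> dict:
    """HowTo with one HowToStep per procedural step."""
    return {
        "@context": "https://schema.org",
        "@type": "HowTo",
        "name": name,
        "step": [{"@type": "HowToStep", "text": step} for step in steps],
    }


def article(headline: str, published: str, modified: str) -> dict:
    """Article with both date fields populated (ISO 8601 date strings)."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published,
        "dateModified": modified,
    }


def script_tag(data: dict) -> str:
    """Wrap a JSON-LD object in the script tag that goes in the page head."""
    return '<script type="application/ld+json">' + json.dumps(data) + "</script>"


# Placeholder content for illustration.
stack = [
    faq_page([("Will GEO replace SEO?", "No. They are complementary surfaces.")]),
    how_to("Run a citation-share audit", ["Write the prompts.", "Log every cited URL."]),
    article("14-Day GEO Playbook", "2026-05-19", "2026-06-02"),
]
tags = [script_tag(item) for item in stack]
```

Run the output through a structured-data validator before shipping; the schema only helps if the marked-up text matches what is visible on the page.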

The BLUF pattern is the single change with the highest ROI per minute spent. If you only do one thing in Days 1-3, do that.
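A quick way to review a page for BLUF is to pull out exactly what an engine would see first. The two helpers below are a rough sketch under simple assumptions (whitespace-separated words, a trailing question mark as the test for a question-shaped heading); both names are hypothetical.

```python
def first_words(text: str, limit: int = 50) -> str:
    """Return the first `limit` whitespace-separated words of the page body."""
    return " ".join(text.split()[:limit])


def non_question_headings(headings: list[str]) -> list[str]:
    """Headings that are not phrased as questions (no trailing '?')."""
    return [h for h in headings if not h.strip().endswith("?")]


# Headings taken from the Day 3 example above.
flagged = non_question_headings(
    ["How does generative engine optimization work?", "GEO Fundamentals"]
)
print(flagged)
```

Read the output of `first_words` on its own: if it does not answer the page's primary question without the rest of the page, the rewrite is not done. Flagged headings are candidates for review, not automatic failures.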

Days 4-9: Reddit and community seeding without spamming

Day 4 – subreddit mapping. From your Day 1 audit, extract every Reddit URL Perplexity and ChatGPT cited. Note the subreddit. You will typically find 5-7 subreddits that account for the bulk of citations in your category. These are your targets. Do not invent a list from intuition – use the audit.
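The subreddit map falls straight out of the audit log. A minimal sketch, assuming the cited URLs follow the usual `reddit.com/r/<name>/...` path shape; the URLs in the usage example are placeholders:

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse


def subreddit_of(url: str) -> Optional[str]:
    """Return the lowercased subreddit name for a reddit.com URL, else None."""
    parsed = urlparse(url)
    host = parsed.netloc.lower()
    if host != "reddit.com" and not host.endswith(".reddit.com"):
        return None
    parts = [p for p in parsed.path.split("/") if p]
    if len(parts) >= 2 and parts[0].lower() == "r":
        return parts[1].lower()
    return None


def top_subreddits(cited_urls: list[str], n: int = 7) -> list[tuple[str, int]]:
    """Most frequently cited subreddits, highest count first."""
    counts = Counter(s for s in map(subreddit_of, cited_urls) if s)
    return counts.most_common(n)


# Placeholder URLs for illustration only.
cited = [
    "https://www.reddit.com/r/SEO/comments/abc123/some_thread/",
    "https://www.reddit.com/r/seo/comments/def456/another_thread/",
    "https://old.reddit.com/r/marketing/comments/x/y/",
    "https://example.com/r/seo",
]
targets = top_subreddits(cited)
```

The default of 7 mirrors the 5-7 subreddits mentioned above; raise it if your category is spread across more communities.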

Days 5-7 – genuine first-person answers. Find recent threads (under 30 days old) in your target subreddits where someone is asking a question your product or content answers. Post a real answer. First person, specific, no link in the first post unless explicitly asked. If the thread has 10+ comments already, your answer needs to be substantively better than what is there or it will be ignored.

The mechanics that work: lead with the BLUF answer (Reddit rewards the same extractability pattern AI engines do), share a specific number or named tool, link only when an existing thread asks for a resource. The mechanics that fail: posting from a new account, dropping a link in the first reply, copy-paste answers across multiple subreddits within the same week.

Days 8-9 – re-audit Reddit-cited mentions. Re-run your 20 prompts. Track the delta in Reddit-citation rate where your brand or your content is now the cited source. Even modest movement here – going from 0 Reddit citations to 2 across 20 prompts – is a meaningful share shift on Perplexity.

The honest part: this surface is slow. Citation share from Reddit seeding usually shows up in days 10-14 of the sprint, not days 8-9. Some teams will see no movement until the next sprint cycle. Stay with the pattern.

Days 10-14: the freshness loop and the listicle-stack pattern

Day 10 – republish your two highest-traffic pages. Update with 2026 data, add dated section headers ("Updated November 2026" inside H2s where appropriate), refresh stat citations, and change dateModified in your JSON-LD. This defends against the 14-day citation decay directly. If the page already cites a 2024 stat, replace it with the equivalent 2026 number. Recency is the easiest signal to ship and the most under-prioritized.
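To decide which pages need a refresh, compare each page's dateModified against the 14-day window. A minimal sketch, assuming you can export a mapping of URL to dateModified as ISO date strings; the paths and dates are invented for the example:

```python
from datetime import date


def stale_pages(pages: dict[str, str], today: date, max_age_days: int = 14) -> list[str]:
    """URLs whose dateModified (YYYY-MM-DD) is more than max_age_days before today."""
    stale = []
    for url, modified in pages.items():
        age = (today - date.fromisoformat(modified)).days
        if age > max_age_days:
            stale.append(url)
    return stale


# Invented example data.
pages = {
    "/pricing-guide": "2026-05-01",
    "/comparison": "2026-05-19",
    "/faq": "2026-05-25",
}
needs_refresh = stale_pages(pages, today=date(2026, 6, 2))
print(needs_refresh)
```

Passing `today` explicitly keeps the check reproducible in a scheduled job. Only bump dateModified when the page content has actually changed.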

Days 11-12 – ship one new listicle in your category. Listicles get cited because they are extractable by construction. The pattern that works: BLUF intro, 7-12 items, each item with an H3 in question or claim form, the JSON-LD triple stack, at least one piece of original data (a chart, a benchmark, a survey result, a screenshot from your own analytics with numbers visible). Original data is the differentiator – it gets your listicle cited instead of the seven other listicles already in the model's training data.

Days 13-14 – end-of-sprint re-audit. Re-run the same 20 prompts. Capture Citation Share of Category per engine. Compare to Day 2. Document which surface drove the biggest delta – own-site extractability, Reddit seeding, freshness, or original data. That ratio tells you where to weight Sprint 2.
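The end-of-sprint comparison is a subtraction per surface. The sketch below assumes you have your brand's citation share broken out by surface for Day 2 and Day 14; the numbers are invented to show the shape of the output, not results from a real sprint.

```python
def share_delta(baseline: dict[str, float], final: dict[str, float]) -> dict[str, float]:
    """Percentage-point change in citation share per key (engine or surface)."""
    keys = baseline.keys() | final.keys()
    return {
        key: round((final.get(key, 0.0) - baseline.get(key, 0.0)) * 100, 1)
        for key in sorted(keys)
    }


def biggest_mover(deltas: dict[str, float]) -> str:
    """Key with the largest positive change."""
    return max(deltas, key=deltas.get)


# Invented shares: fraction of all citations that point at your brand, by surface.
day_2 = {"own_site": 0.05, "reddit": 0.0}
day_14 = {"own_site": 0.15, "reddit": 0.05}
deltas = share_delta(day_2, day_14)
print(deltas, biggest_mover(deltas))
```

Report the change in percentage points rather than as a relative percentage; a move from 0 to anything has no meaningful relative change, and that is exactly the case Reddit seeding tends to produce.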

What didn't move and where the lift is fake

Three things I tracked across multiple sprints did not materially move citation share inside a 14-day window.

Backlink building. New referring domains acquired during the sprint did not correlate with citation lift. AI engines weight community signal, freshness, and extractability more heavily than referring domain count for the queries I tested. Backlinks still matter for blue-link SEO. They are not the lever for GEO inside a two-week sprint.

Pure keyword optimization. Adjusting title tags, meta descriptions, and on-page keyword density on existing pages produced no measurable citation movement for transactional intent. It moved blue-link rankings modestly. The two systems are decoupling.

Brand Radar's reported numbers. I tracked the same brand across Brand Radar and a manual prompt audit for three weeks. Brand Radar reported 8 ChatGPT mentions; the manual audit found 134. Acting on Brand Radar's data would have meant concluding GEO work was not moving the needle when it was. A measurement tool that under-reports by roughly 94% is worse than no tool, because it lets you draw confident, wrong conclusions.

The repeatable principle

AI search is a citation game, not a ranking game. The four surfaces – extractable own-site content, community signal, original data, and freshness – are durable inputs. They will keep mattering as engines, weights, and tools change.

Run the sprint quarterly. One-off audits decay inside the next freshness window. A 14-day sprint repeated every 90 days compounds: each sprint widens your Citation Share of Category against competitors who are still running keyword-only programs. Pick your measurement tool by the engine that maps to your buyer, accept that your in-stack default (Brand Radar, for most teams) is probably the wrong choice for this job, and treat Reddit as a frontline channel, not a side project.

The next Monday-morning move: schedule Day 1. Write the 20 prompts. Run them this week. The audit takes 90 minutes and tells you exactly what to ship for the next 13 days.

How does generative engine optimization work?

GEO structures content so AI engines like ChatGPT, Claude, Perplexity, and Gemini cite it as a source when generating answers. It prioritizes extractability (BLUF answers, question-shaped headings, JSON-LD), community signal (Reddit, niche forums), original data, and content freshness over traditional backlinks and keyword density.

Will GEO replace SEO?

No. They are complementary surfaces. SEO drives clicks from blue-link search results. GEO drives citations inside AI-generated answers. The two surfaces increasingly overlap but use different ranking inputs, and most B2B categories still see the majority of measurable traffic from blue-link search in 2026.

How do you get citations from Perplexity specifically?

Lead with the answer in the first 50 words (BLUF pattern), structure content with question-shaped H3 headings, build topical authority across 5-10 related pages on the same theme, seed answers in the 5-7 subreddits Perplexity cites in your category, and refresh content inside a 7-14 day window to defend against the 23% recency decay.

Is Perplexity good for measuring citation share?

Yes – it is the highest-citation-density engine in the market, citing 5-7 sources per answer on average and weighting Reddit and original data heavily. That density makes it the strongest measurement surface for tracking GEO progress sprint over sprint, even if your buyer primarily uses a different engine.

Is SEO dead or evolving in 2026?

Evolving. Conductor's 2026 data shows GEO at roughly 12% of marketing budgets and 32% of leaders ranking it as their top priority – but blue-link search still drives the majority of measurable traffic for most B2B categories. The right framing is reallocation, not replacement.

Jun 2, 2026